Getting it onto your local machine

Still with me? You must be, else you wouldn’t be reading this. I think. Anyway, you’ve dutifully read part 1 of this two-part series and converted an AWS EC2 instance to a VMWare Workstation (in my case, at least) instance. So now the question is – how oh how oh how do you get a BIG file off of the Cloud?

This is not a hard step but it takes a long time because of the size of the files. Note that if you get rid of those media files or if you have a faster connection than my DSL line it isn’t quite so painful.

Compress it to make it fast(er)

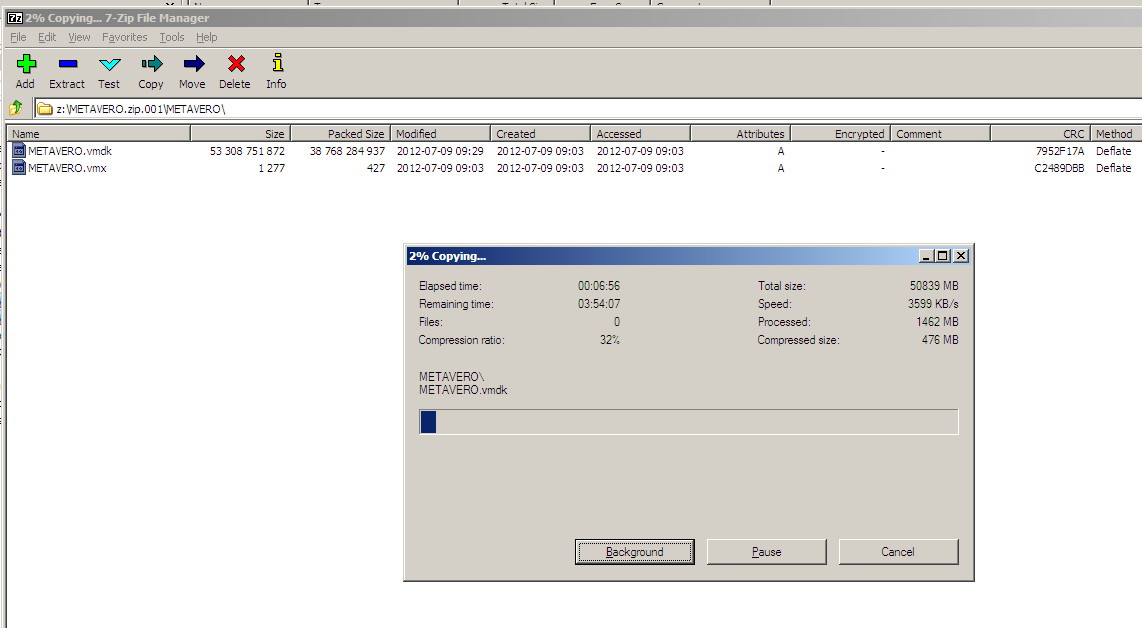

Although I suppose you could do this without using the installed 7-Zip compression program, I can’t see why you would.

Dan did a bunch of experiments with getting the best performance out of 7-Zip, and found that the LZMA2 method with a 24-bit word, 256 MB block, and 8 threads was the fastest combination of settings.

I am not going to show the individual steps for doing this. You can just take the defaults on the compression, but the result will take longer to create, be bigger, and be slower to download, so I suggest trying Dan’s settings.
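
For anyone who prefers the command line to the 7-Zip dialog, here is a minimal sketch of how Dan’s settings might map onto 7-Zip switches. Some of it is my own reading rather than anything Dan confirmed: I am treating the “24bit word” as a 2^24 byte (16 MB) dictionary, the archive and folder names are hypothetical, and the 4480 MB volume size is just one roughly DVD-sized choice. The function only builds the argument list, so nothing runs until you hand it to a shell.

```python
def build_7z_command(archive, source, threads=8, dict_bits=24,
                     block_mb=256, volume_mb=None):
    """Return a 7-Zip argument list approximating the settings in the post."""
    # Assumed reading of "24bit word": a dictionary of 2^24 bytes = 16 MB.
    dict_mb = 2 ** dict_bits // 2 ** 20
    args = [
        "7z", "a", "-t7z",      # add files to a .7z archive
        "-m0=lzma2",            # LZMA2 compression method
        f"-md={dict_mb}m",      # dictionary size
        f"-ms={block_mb}m",     # solid block size
        f"-mmt={threads}",      # number of CPU threads
    ]
    if volume_mb is not None:
        args.append(f"-v{volume_mb}m")  # split into volumes of this size
    args += [archive, source]
    return args


if __name__ == "__main__":
    # Hypothetical paths; substitute wherever the converted VM lives.
    command = build_7z_command(r"D:\Metavero.7z", r"D:\Metavero", volume_mb=4480)
    print(" ".join(command))
```

Printing the command first and pasting it into a prompt keeps the experiment cheap: changing one switch and timing the run is roughly how settings like these get compared.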

Downloading the compressed files

I’ve done this four different (What, you think I know all of this stuff before I write it down? If only. Nope, I have to blunder through the options until I get to the right answer.) ways:

- Transferring the file(s) from the AWS instance to an FTP server and then downloading them (this got me a nastygram from my internet provider because of size and download threads which ate up the box for everyone – whoops, so firmly rejected on my part).

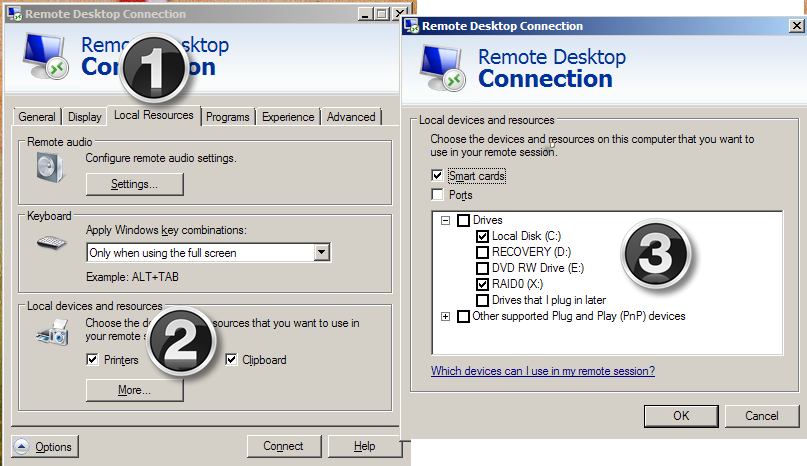

- Use Terminal Services to transfer the files. Just follow the three steps below in the TS client. You must set this before you connect to your instance. Your local drives will then look like mapped drives from AWS.

- Setting up an FTP server on your AWS instance and downloading from there. Note that you will need to open up the default port of 21 in your AWS Security Group/firewall.

- Use AWS’ S3 – This is the way I did it. It’s a little confusing at first, but Cloudberry Explorer makes it dead simple.

Using Simple Storage Service (S3)

Given the other three approaches (two really, as I would avoid the first approach of sending the file, or files if you split them up, to an external FTP server), why use S3?

I used 7-Zip to both compress the VMWare files and to split them up into DVD sized (4.7 gigabyte) files. I have had issues with my wonderful (can you tell it annoys me?) DSL connection before (Oracle e-delivery is where I’ve experienced this). What happens is that the files get downloaded, look like they’re valid, but in fact are corrupt.

S3 allows me to redownload parts of my VM that fail. It’s a pain to do that, and slow, and it costs (S3 charges you for downloads – pray that you have a better internet connection than me) but it is better than downloading everything over and over again. Also, it gives me (and you, too) a chance to learn a new technology. I should mention that John Booth mentioned S3 as an approach – as always, he has some really great ideas and I am at least smart enough to listen to them. :)
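
Since the corrupt parts look perfectly valid, one way to find out which ones need to be pulled down again is to compare checksums. This is a sketch of that idea rather than what I actually did: it assumes the split files follow 7-Zip’s usual `name.7z.001`, `name.7z.002` naming, and the function names are made up for illustration. Build the manifest on the AWS instance before uploading, carry that small dictionary down with you (a text file will do), and check the downloaded parts against it on the laptop.

```python
import hashlib
from pathlib import Path


def sha256_of(path, chunk_size=1024 * 1024):
    """Hash a file in chunks so multi-gigabyte parts never sit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(folder, pattern="*.7z.*"):
    """Map each split-archive part in folder to its SHA-256 digest."""
    return {part.name: sha256_of(part)
            for part in sorted(Path(folder).glob(pattern))}


def corrupt_parts(manifest, folder):
    """Return the part names that are missing or whose digest differs."""
    bad = []
    for name, expected in sorted(manifest.items()):
        local = Path(folder) / name
        if not local.is_file() or sha256_of(local) != expected:
            bad.append(name)
    return bad
```

Anything `corrupt_parts` returns is a part to download again; everything else can stay where it is.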

I am not going to provide a detailed tutorial on S3 but suggest that you read here.

I essentially treat S3 as a super easy to set up FTP Cloud server that does not require me to configure the AWS instance’s IIS settings. Note that most Cloud-based services such as OpenDrive or Box limit the size of uploaded files. My provider, OpenDrive, has a file limit of 100 megabytes per file, so with my 4.7 gigabyte files, I really had to come up with another approach.

If you are interested in tools other than Cloudberry, have a read of this thread.

If you are interested in using Cloudberry, read this very nice tutorial.

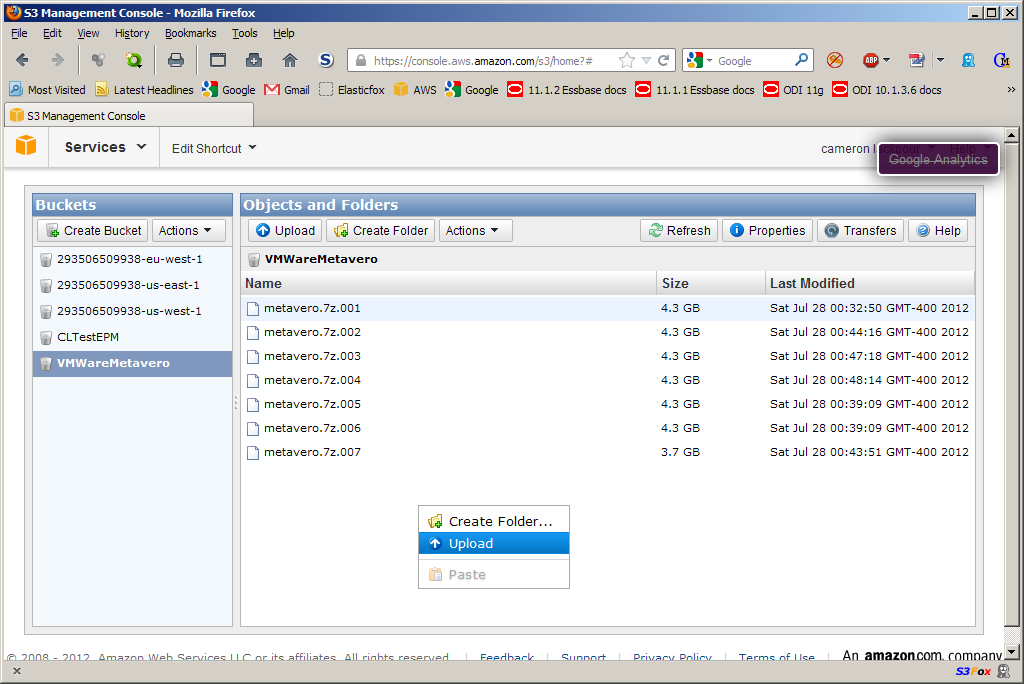

NB

– You can also use the AWS console’s S3 component to move the data

around – that’s what Dan did. It is as simple as opening up the AWS

console on your AWS instance (sort of like a mirror facing a mirror) and

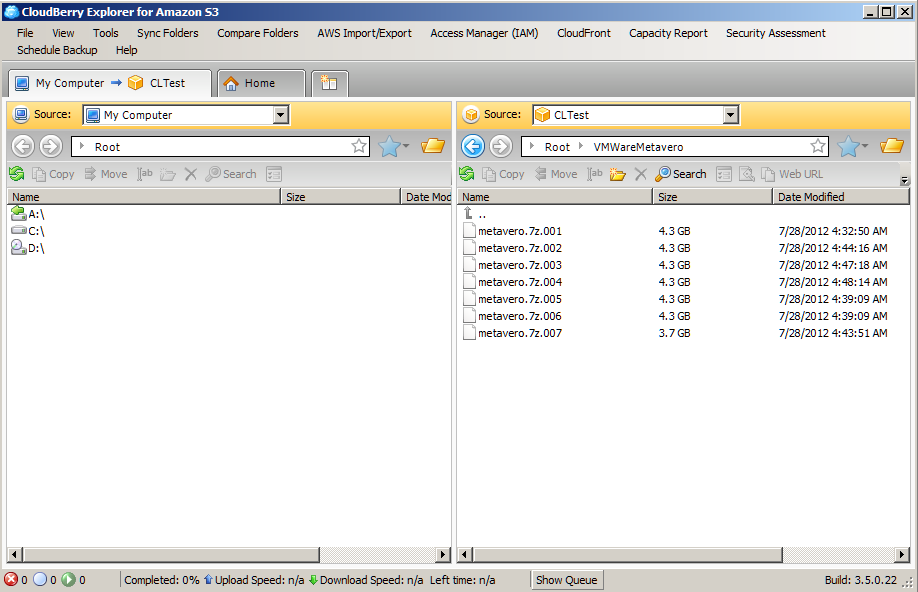

then right clicking inside your bucket like the below: I did the same thing via Cloudberry to a S3 bucket I called VMWareMetavero.

I did the same thing via Cloudberry to a S3 bucket I called VMWareMetavero. To get it onto my laptop, I installed Cloudberry again and then downloaded it to my external hard drive.

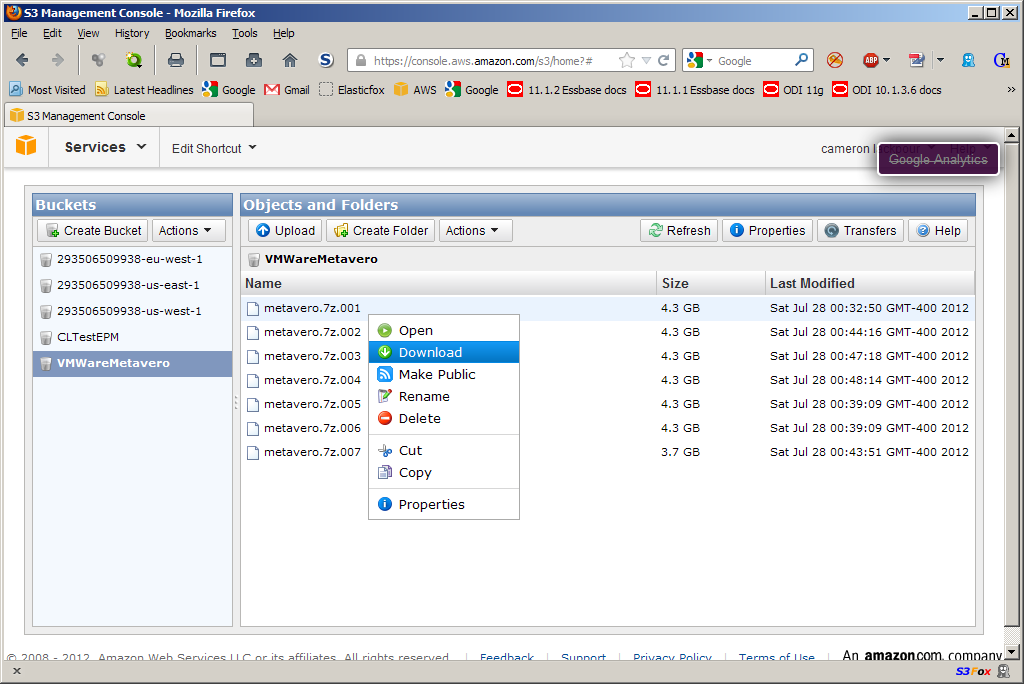

Alternatively, I could have just gone into S3 via the AWS console and done this:

To get it onto my laptop, I installed Cloudberry again and then downloaded it to my external hard drive.

Alternatively, I could have just gone into S3 via the AWS console and done this: Cloudberry made it a little less painful so I went that way.

That’s it.

Cloudberry made it a little less painful so I went that way.

That’s it.

Unzipping the files (or even combining them)

Once you have the download to the Ground completed, 7-Zip needs to be installed on your laptop if it isn’t already. Once that is done, decompress. Again, this takes a while.

Avoiding the Blue Screen of Death (BSOD)

At this point you have:

- Removed the media files from c:\media unless you really, really, really want them. You might, but probably not.

- Converted the 11.1.2.2 AMI to a VMWare Workstation VM

- Compressed that VM using 7-Zip’s LZMA2 method with a 24-bit word, 256 MB block, and 8 threads

- Downloaded that through S3 (or whatever method you prefer but that’s the easiest) to your laptop

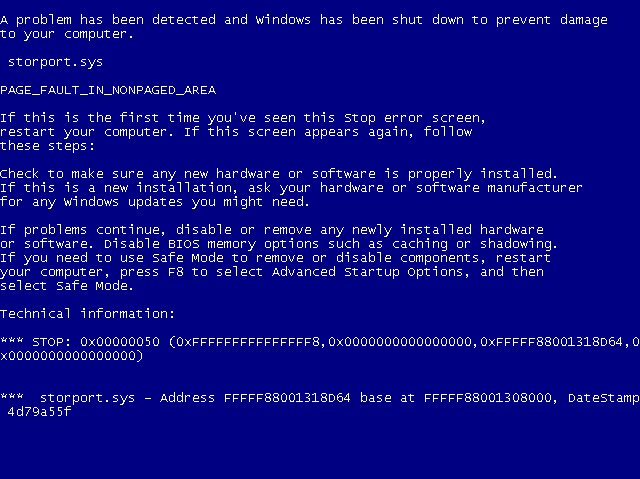

So you’re all ready to go, right? Uh, no, because here’s what happens when you try to fire up that VM in Workstation.

Auuuuuuuuugggggggghhhhhhhh!!!!!!!!!! It’s the Blue Screen of Death!!!!!!!!!!!

And not just the BSOD, but a BSOD that will immediately reboot Windows so that you have a lovely endless loop of BSODs. Fun times, fun times.

At least it’s fast – it took me about five tries (so we are talking 30 minutes of reboots) to get that screenshot with Snagit. It will flash very, very, very quickly on your screen. Is there a cure? You betcha.

The cure for the Blues

If you want it all in one succinct (but not terribly well explained, or at least I couldn’t follow the directions until I did it three times) thread, read this on the VMware support forum. I’m going to show it to you step by step and will make it a tiny bit less painful.

Just to be completely up front, I am taking everything I read in that thread and putting pictures to it – the brains behind figuring this out belong solely to ivivanov and leonardw.

The issue is the RedHat SCSI Bus Driver (really all of the Red Hat services, all of which start with “rhel”) despite the storport.sys message in the BSOD. Who would believe that an error message is misleading or doesn’t give all of the information you need? Why, I would, and so should you. The RedHat services are part of the EC2 Amazon offering and simply don’t work on VMWare (why I know not as I am no hardware expert, but I can certainly attest to their super-duper not working). It blows up Windows 2008 R2 on VMWare real good.

Richard Philipson tried out part 1 and pointed out (yep, some people actually read this blog, thankfully, otherwise this is the most involved echo chamber ever) that the ec2config service is superfluous (and causes a wallpaper error on startup) and that those RedHat services are “a set of drivers to permit access to the Xen virtualized hardware presented by Amazon EC2 to the guest operating system.” It makes sense that without Xen under the covers, there is no Xen virtualized hardware.

NB – There is a separate intermittent error in VMWare if you have more than two cores in your laptop. If you are on a machine with more than two cores, you may get a multiple processor error (a different BSOD). If that is the case, you should set the number of processors to 1 and the number of cores to 2 in VMWare.

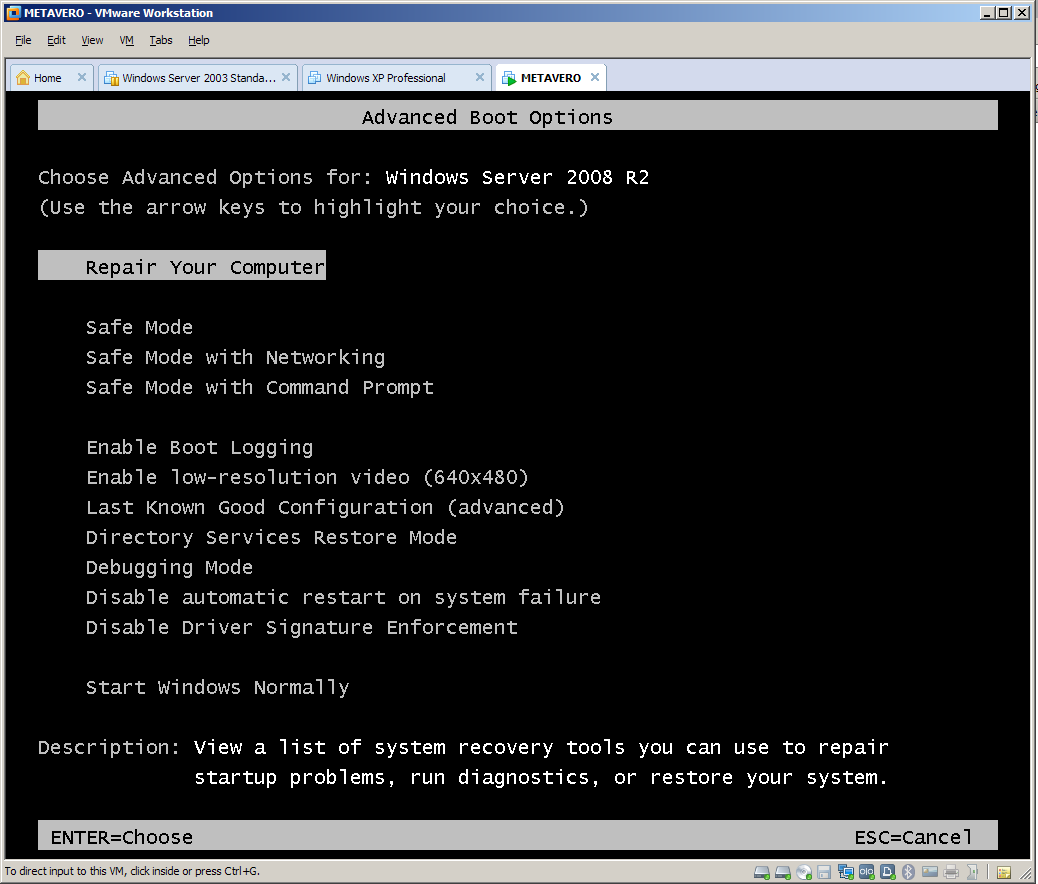

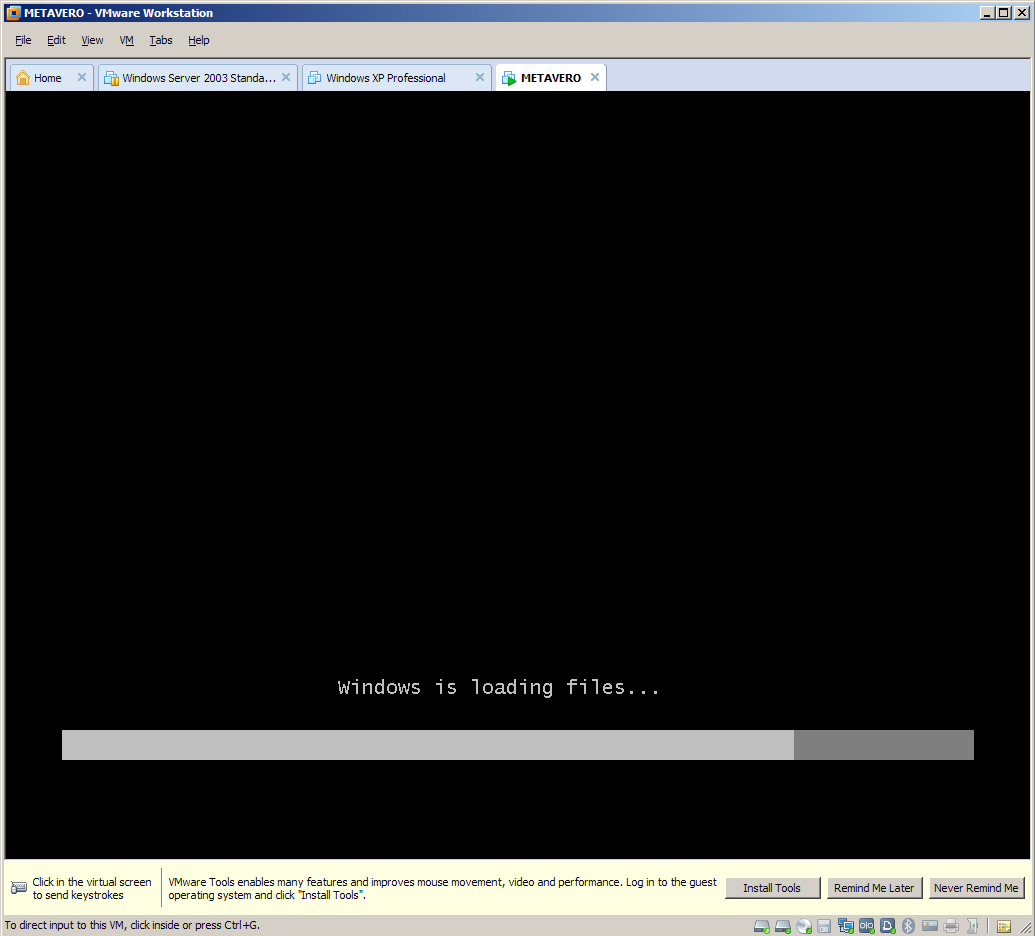

Step 1 – Getting into Boot Options

Open up your nifty new VM in VMWorkstation and start it.

As it starts up, hover your mouse over the VMWorkstation window and press Ctrl+G. You need the VM to get control of the keyboard/mouse as you are going to be holding down the F8 key. If your Windows host has control, F8 will toggle a selector bar in the VMWorkstation application and you will not be able to get into Advanced Boot Options. It’s a total Pain In The You Know What.

No worries if you don’t get it the first time as the Metavero VM will crash very quickly indeed. :) A VMWorkstation bar will pop up telling you to install VMWare Tools. Ignore that for now but you will need to install this.

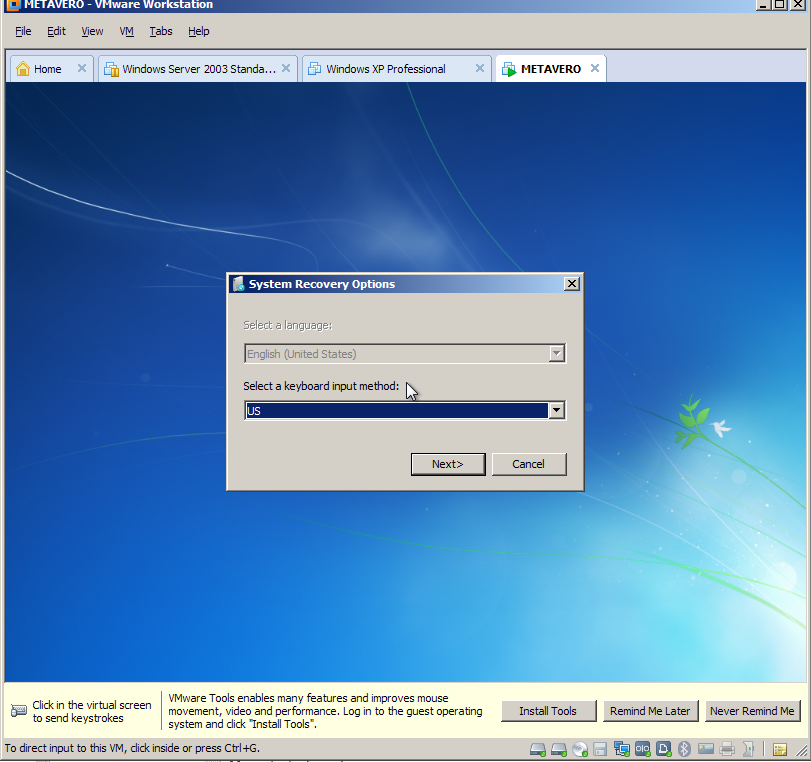

A VMWorkstation bar will pop up telling you to install VMWare Tools. Ignore that for now but you will need to install this. Step 2 – Go through the System Recover Options

First select a keyboard.

Step 2 – Go through the System Recover Options

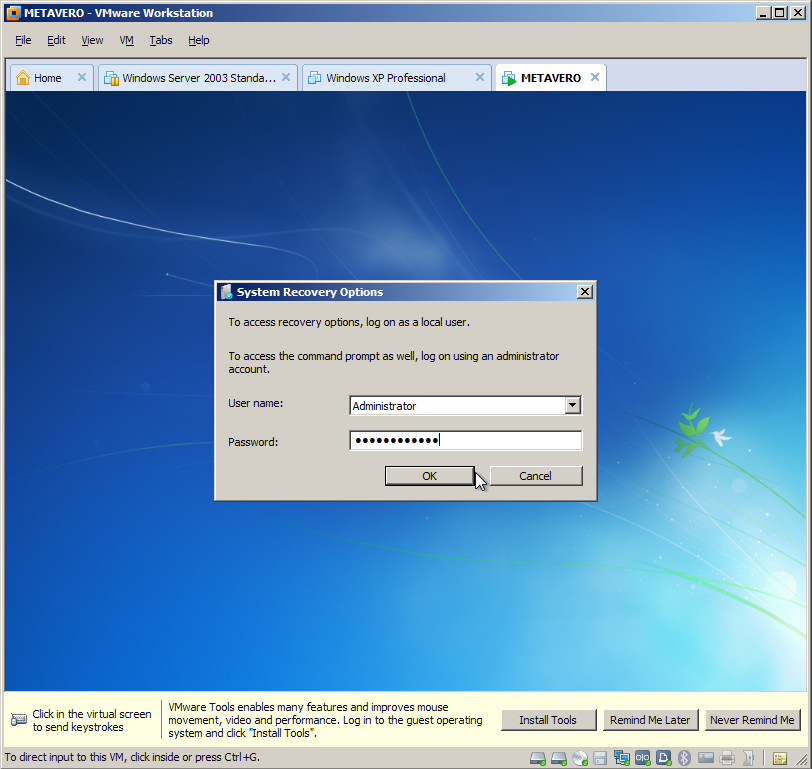

First select a keyboard. Then log in. The cool thing is this is just like the AMI – username Administrator, password epmtestdrive.

Then log in. The cool thing is this is just like the AMI – username Administrator, password epmtestdrive. Step 2 – Run a Command Prompt and then Regedit

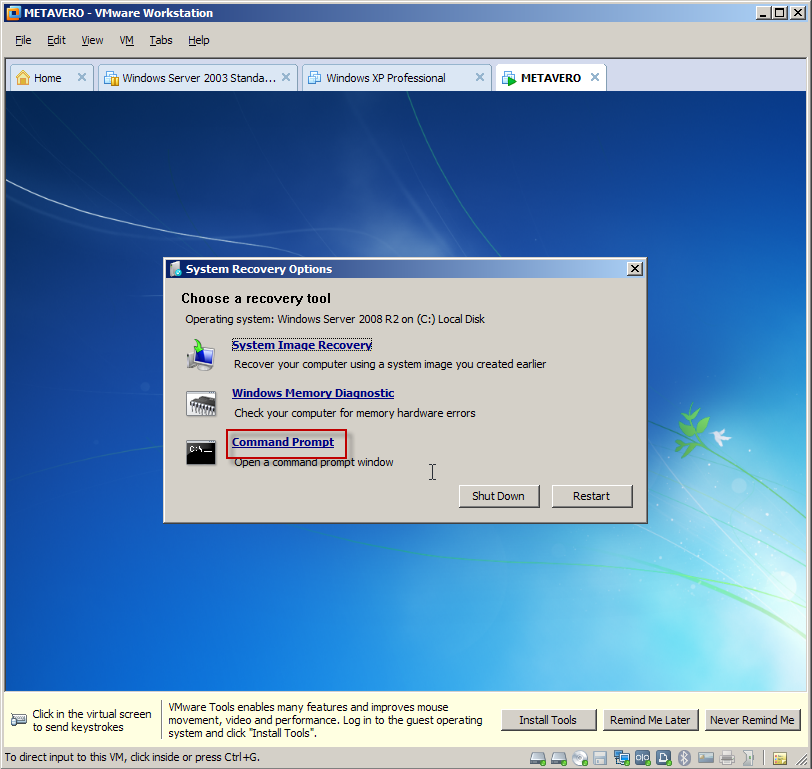

Select Command Prompt.

Step 2 – Run a Command Prompt and then Regedit

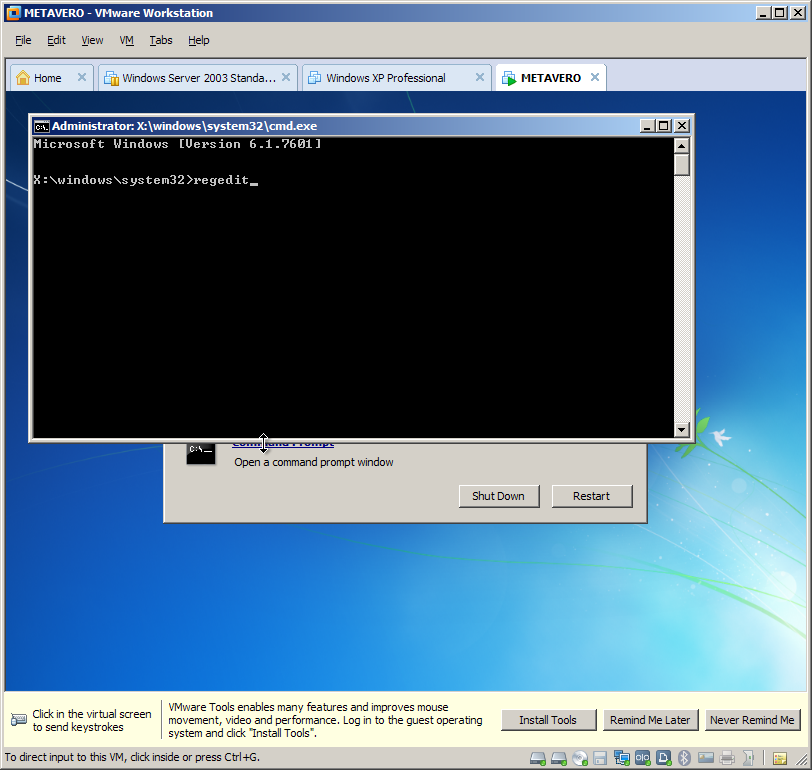

Select Command Prompt.  You will then run Regedit from x:\windows\system32.

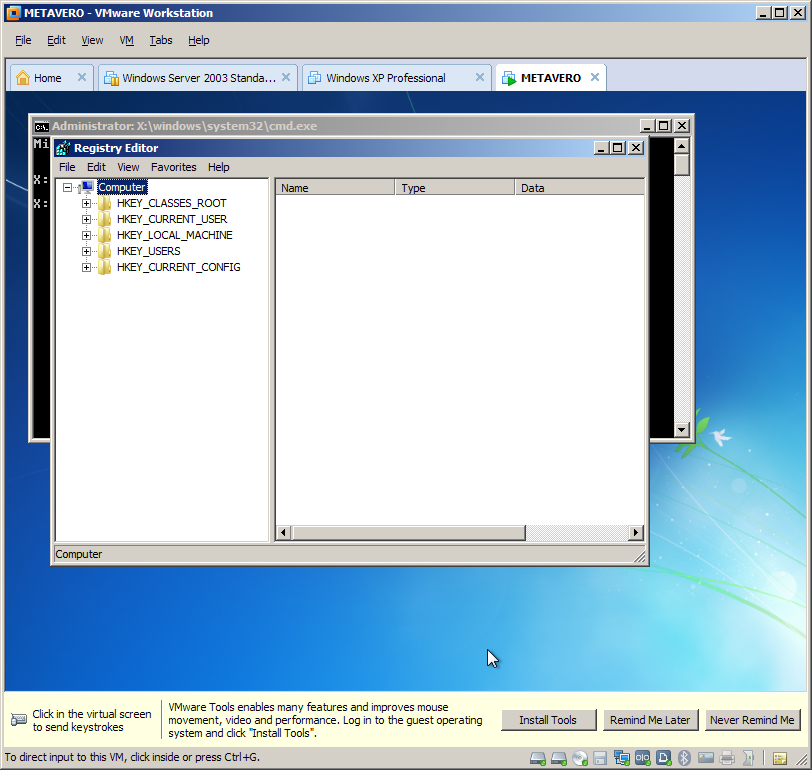

You will then run Regedit from x:\windows\system32. Here it is:

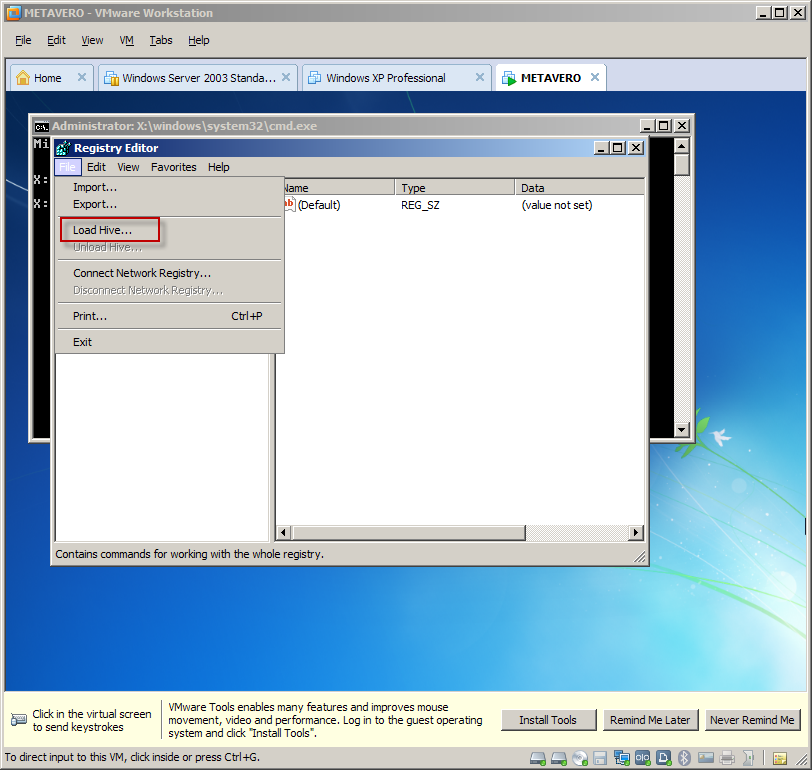

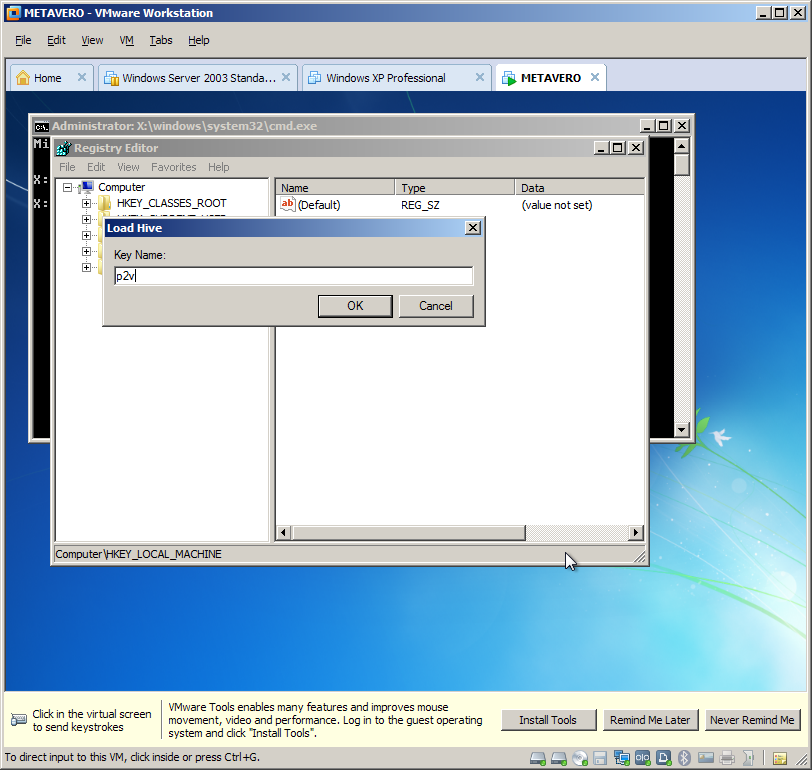

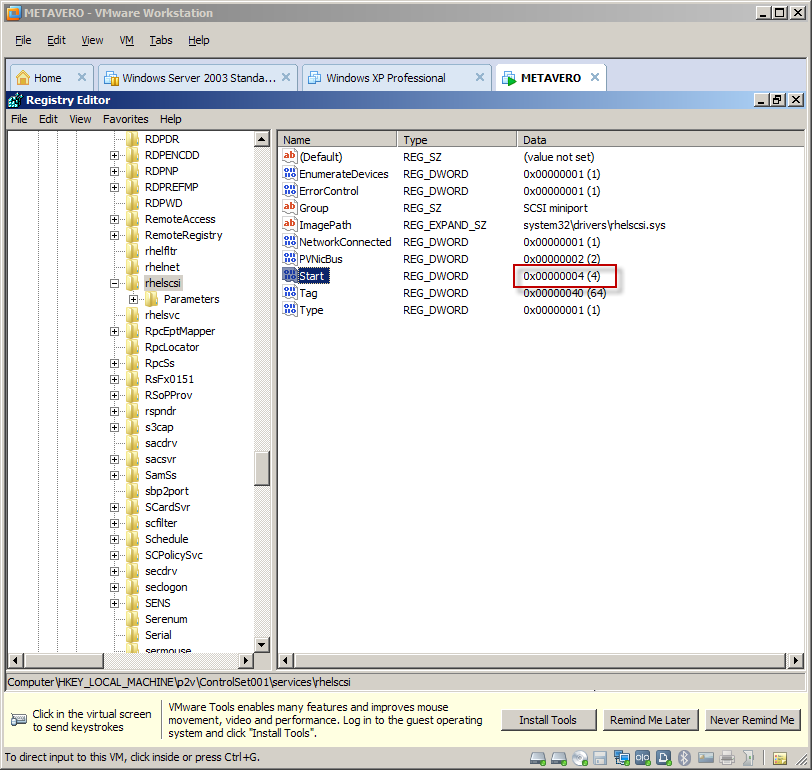

Here it is: Click on HKEY_LOCAL_MACHINE and then File->Load Hive.

Click on HKEY_LOCAL_MACHINE and then File->Load Hive. Navigate to c:\windows\system32\config and select the SYSTEM file and click on Open.

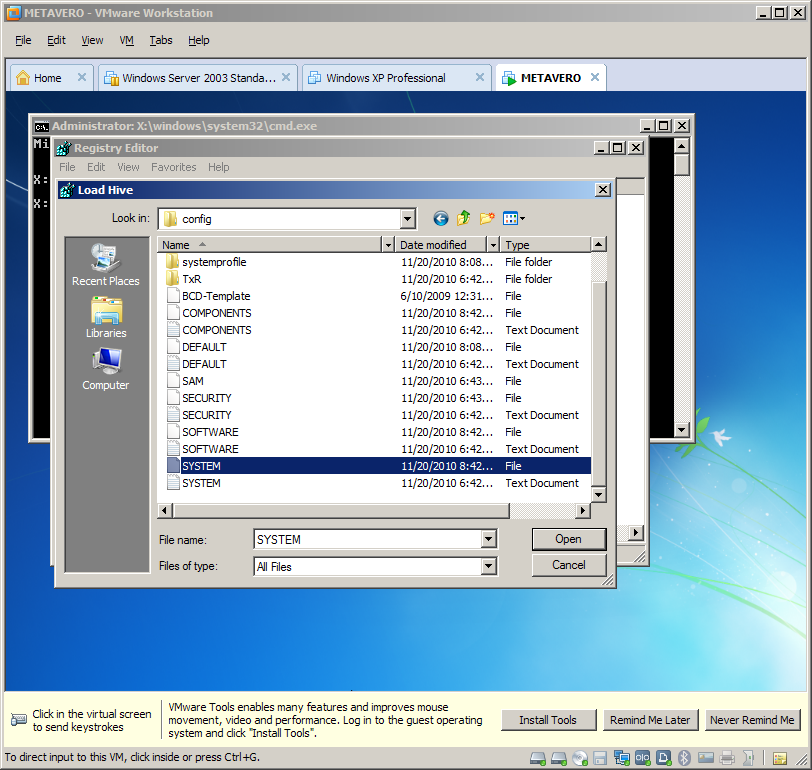

NB – This must be on the C: drive, not the X (that’s the repair drive). Here’s what x:\windows\system32\config looks like. Note the two SYSTEM files. You do NOT want this as it does not contain the services.

Navigate to c:\windows\system32\config and select the SYSTEM file and click on Open.

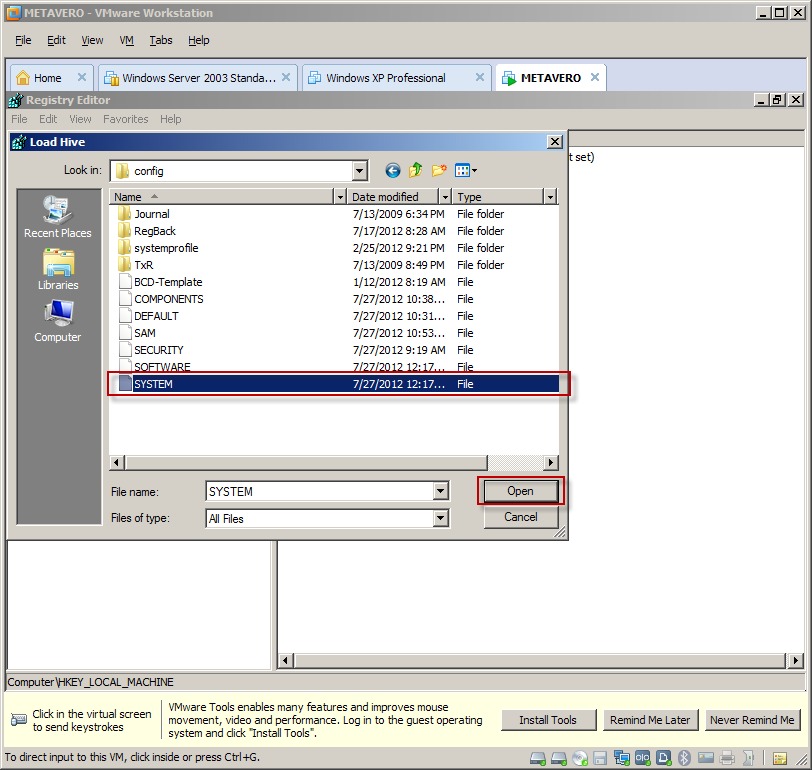

NB – This must be on the C: drive, not the X (that’s the repair drive). Here’s what x:\windows\system32\config looks like. Note the two SYSTEM files. You do NOT want this as it does not contain the services. What you do want to see is this and it’s only available off of the C drive:

What you do want to see is this and it’s only available off of the C drive: Type in “p2v” into the Key Name field and click OK.

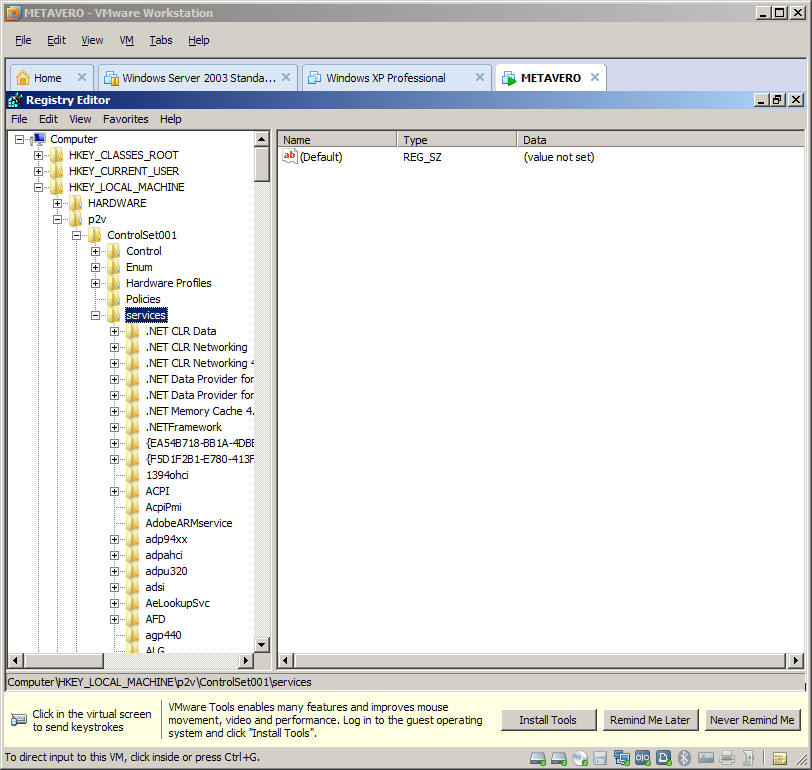

Type in “p2v” into the Key Name field and click OK. Navigate to HKEY_LOCAL_MACHINE\p2v\ControlSet001\services.

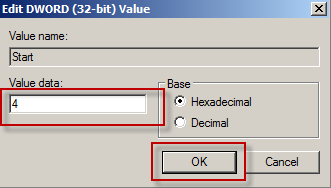

Navigate to HKEY_LOCAL_MACHINE\p2v\ControlSet001\services. For

each of the rhelfltr, rhelnet, rhelscsi, amd rhelsvc services, click on

the service in question and select the Start parameter.

For

each of the rhelfltr, rhelnet, rhelscsi, amd rhelsvc services, click on

the service in question and select the Start parameter.

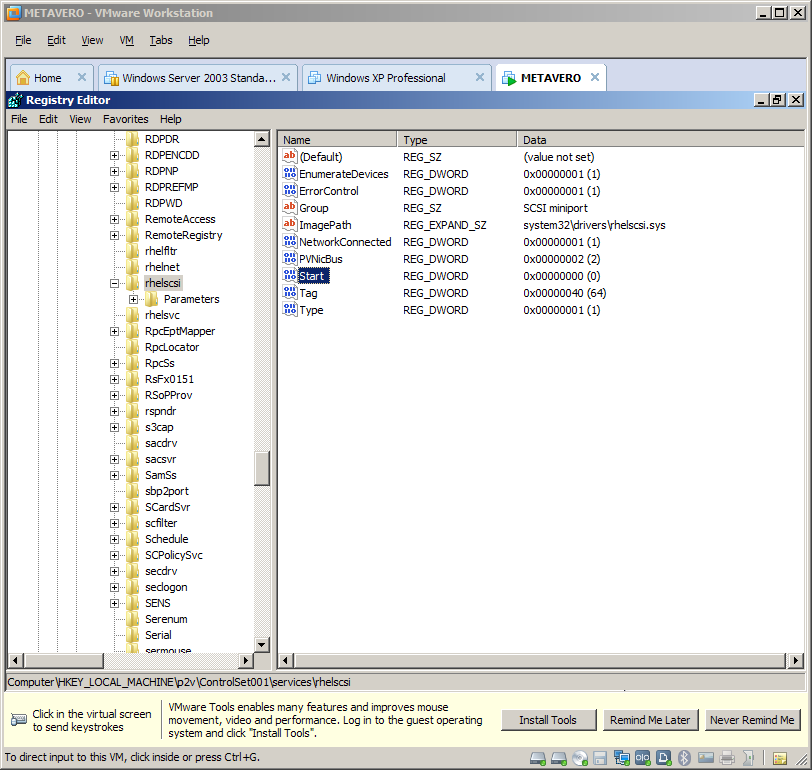

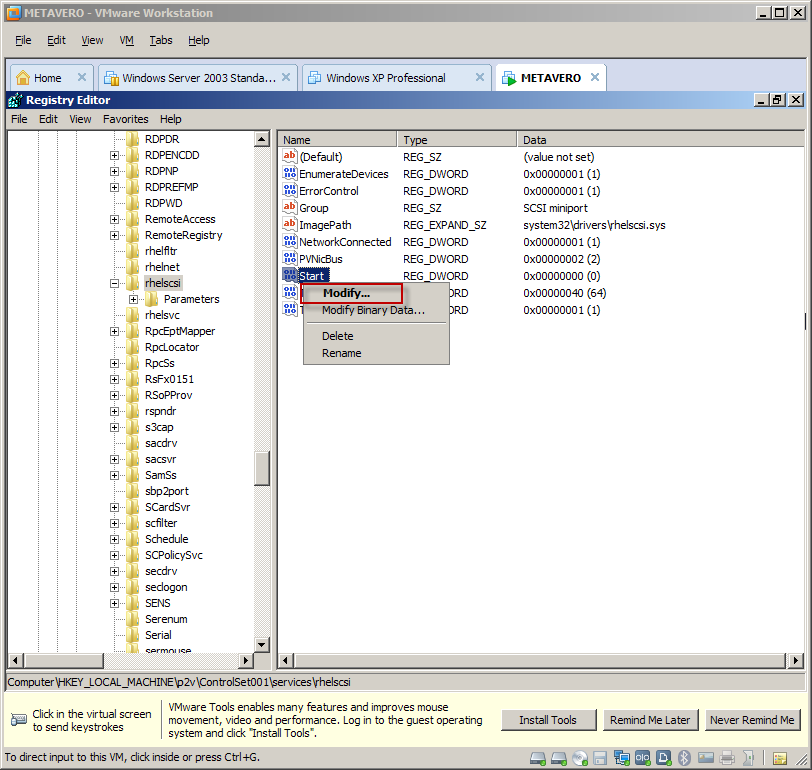

In

the below screenshot I have selected rhelscsi. Note that there is some

discussion on that VMWare thread that only rhelscsi needs to be

disabled. I’ve tried that and sometimes it works and sometimes it

doesn’t. Mu suggestion is to disable all four.

In

the below screenshot I have selected rhelscsi. Note that there is some

discussion on that VMWare thread that only rhelscsi needs to be

disabled. I’ve tried that and sometimes it works and sometimes it

doesn’t. Mu suggestion is to disable all four. Right click on Start, select Modify, and change the value from 0 (or whatever) to 4 which disables the service.

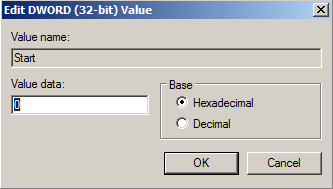

Right click on Start, select Modify, and change the value from 0 (or whatever) to 4 which disables the service. From this:

From this:

To this and click OK:

To this and click OK:

Note the value of 4:

Note the value of 4: Do it again for rhelfltr, rhelnet, and rhelsvc. All of these services need to be stopped.

With all four services disabled, select the key again.

Do it again for rhelfltr, rhelnet, and rhelsvc. All of these services need to be stopped.

With all four services disabled, select the key again.

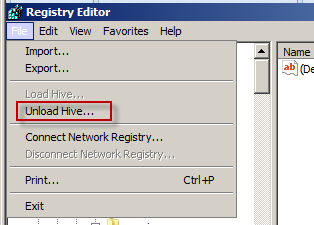

Then select Unload Hive.

Select Yes in the confirmation dialog box.
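
If clicking through Regedit four times sounds tedious, the same load, edit, and unload sequence can in principle be typed at that command prompt with `reg.exe`. I have not tried this against the Metavero VM, and I am assuming `reg.exe` is present in the recovery environment, so treat the sketch below as a cheat sheet generator and nothing more. It simply prints the commands, using the same p2v key name, ControlSet001 path, and Start value of 4 as the screenshots.

```python
RHEL_SERVICES = ["rhelfltr", "rhelnet", "rhelscsi", "rhelsvc"]
START_DISABLED = 4  # the Start value that disables a service


def disable_service_commands(hive_file=r"c:\windows\system32\config\SYSTEM",
                             mount="p2v",
                             control_set="ControlSet001",
                             services=RHEL_SERVICES):
    """Return reg.exe command lines mirroring the Regedit steps above."""
    root = rf"HKLM\{mount}"
    # Load the offline SYSTEM hive from the C: drive under a temporary key.
    commands = [f"reg load {root} {hive_file}"]
    for service in services:
        key = rf"{root}\{control_set}\services\{service}"
        commands.append(
            f"reg add {key} /v Start /t REG_DWORD /d {START_DISABLED} /f"
        )
    # Unload the hive so the change is written back before rebooting.
    commands.append(f"reg unload {root}")
    return commands


if __name__ == "__main__":
    for line in disable_service_commands():
        print(line)
```

Run it on any machine with Python, then type the six printed lines into the recovery prompt. The Regedit route has the advantage that you can see each value change as you make it.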

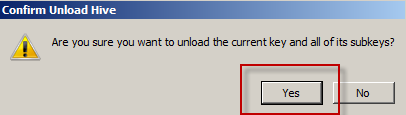

Minimize Regedit, and then select Shut Down. You will then restart the VM.

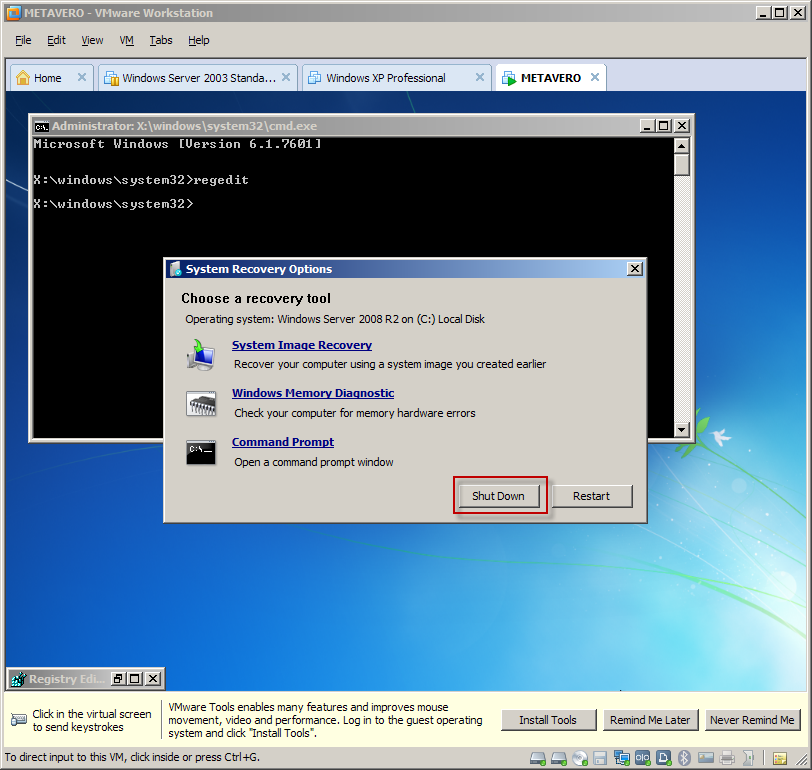

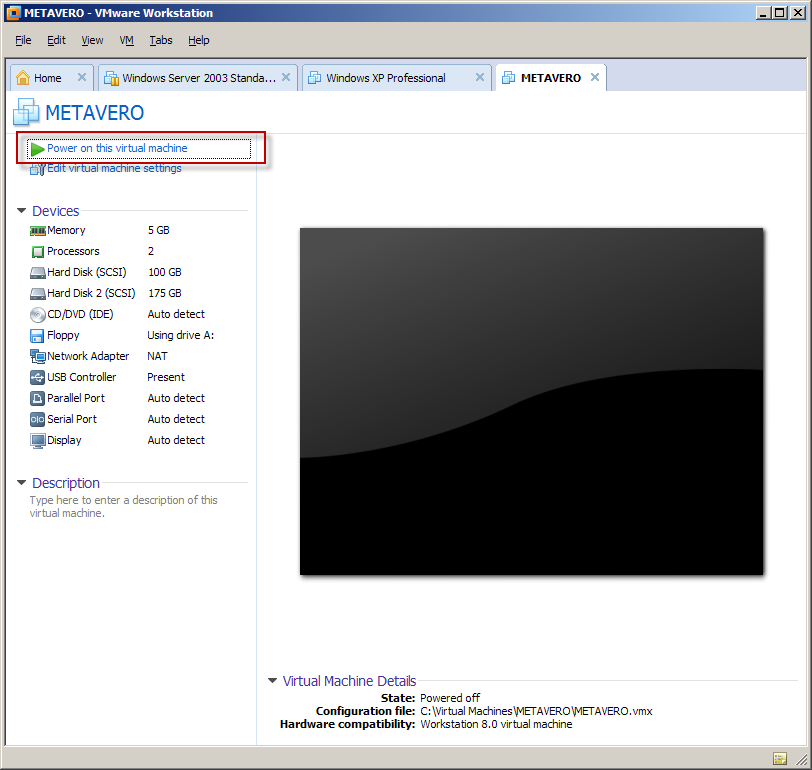

Minimize Regedit, and then select Shut Down. You will then restart the VM. Start the Metavero (that’s what I named it) VM back up.

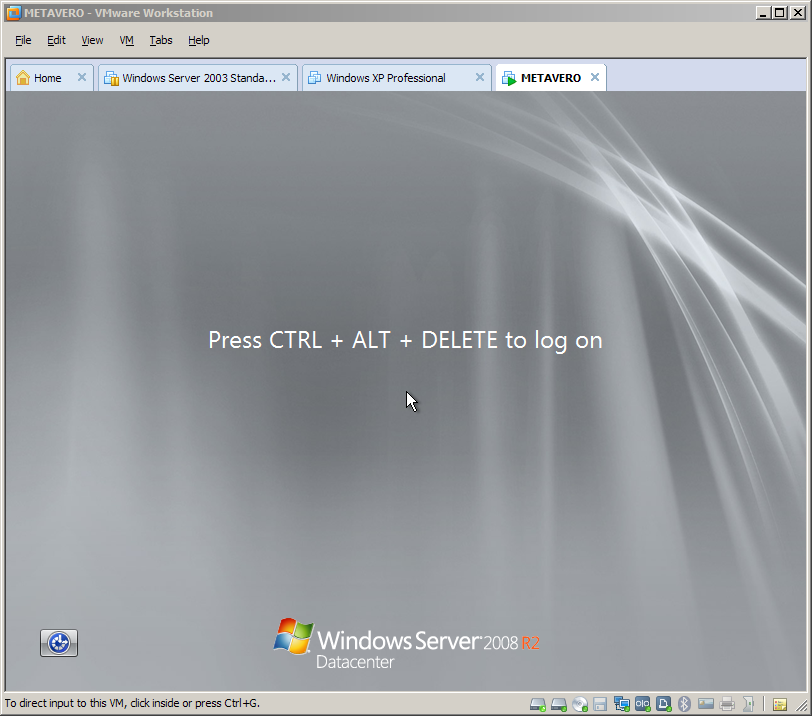

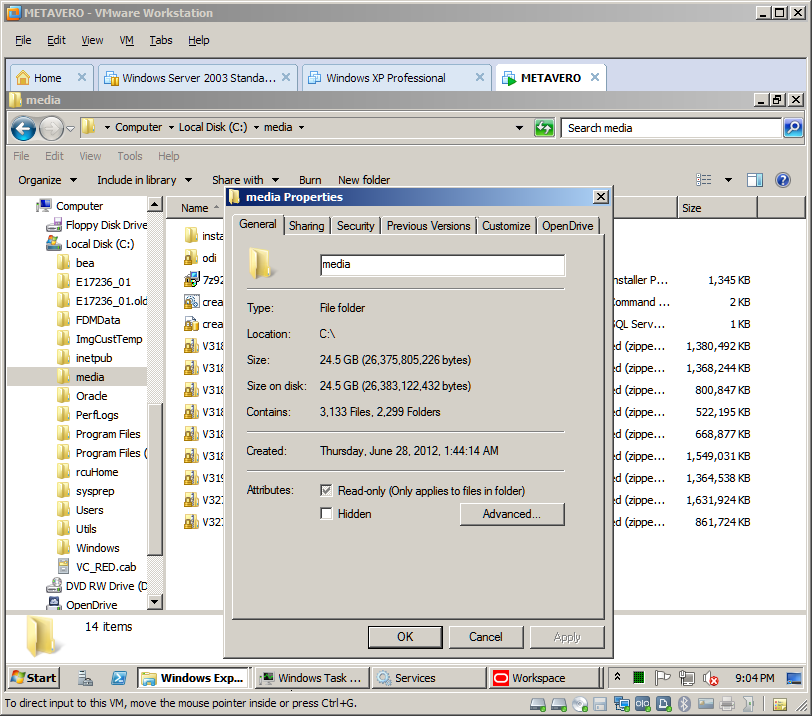

Start the Metavero (that’s what I named it) VM back up. Ta da, you are now running (and not BSODing) Windows 2008 R2:

Ta da, you are now running (and not BSODing) Windows 2008 R2: In VMWorkstation, VM->Send Ctrl+Alt+Del to get the login.

NB – You can also hit Ctrl+Alt+Insert to get the same thing.

In VMWorkstation, VM->Send Ctrl+Alt+Del to get the login.

NB – You can also hit Ctrl+Alt+Insert to get the same thing. And there you are:

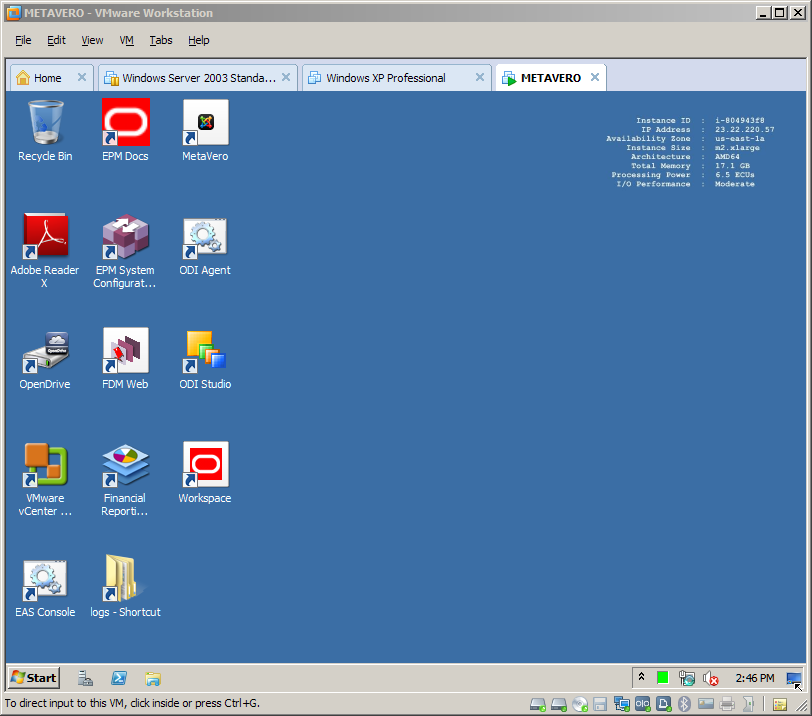

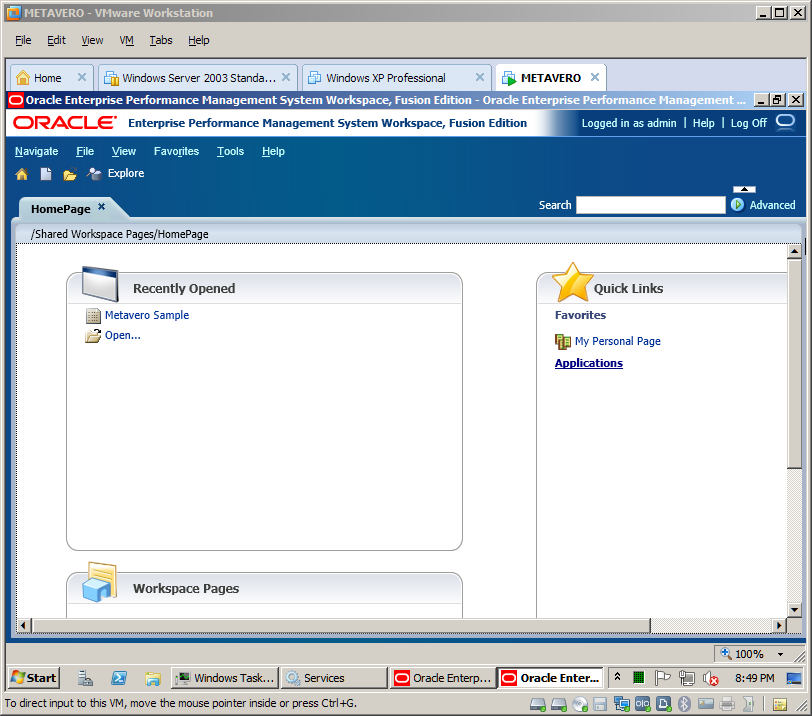

And there you are: And finally (at least on my laptop, there is a fair amount of time before this all boots up):

And finally (at least on my laptop, there is a fair amount of time before this all boots up):

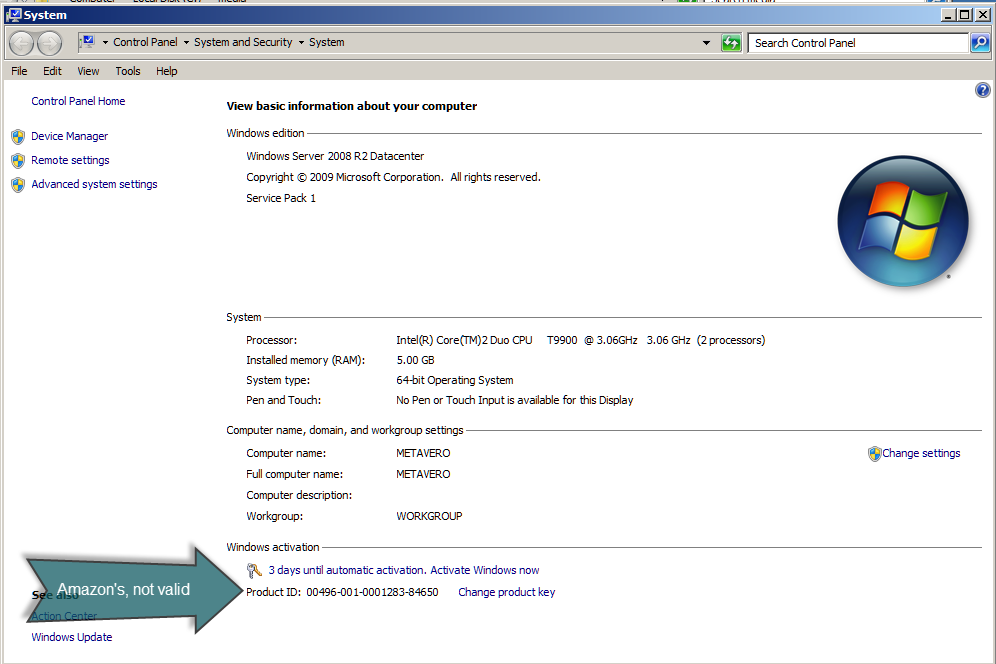

Don’t forget to activate Windows

You have three days to apply that valid key for Windows 2008 R2 Datacenter. No, I am not going to give you a valid license key. But I have given you a lot of ways to get one, all of them legal.

Interestingly, Microsoft Action Pack (to which both Dan and I belong) does not support 2008 R2 Datacenter (bummer for those of us with a MAPS subscription), but MAPS does allow you to sign up for TechNet for free, and then one can get a valid 2008 R2 Datacenter key. Whew. Thanks to Dan for figuring this out as it was not exactly straightforward.

If you are on the fence between MAPS and TechNet, note that TechNet Professional only costs $349 for the first year but MAPS (you do have to qualify as a partner) gives multiple internal use licenses. You decide.

So what do we have?

Well, in the case of both Dan and me, slightly different outcomes.

In Dan’s environment, running on a 24 gigabyte laptop, he has a pretty awesome EPM installation.

In my world, running on an 8 gigabyte laptop, I pretty much have an unusable EPM installation because my host laptop simply doesn’t have enough horsepower. Although I do have a nice blog post. :)

Based on our tests, you simply must have a 16 gigabyte laptop to make this work acceptably.

What’s the right choice – the Cloud or the Ground?

As I wrote above, if you don’t have a multiprocessor, 16+ gigabyte laptop, with plenty of disk space, you can pretty much forget this approach. A valid Windows 2008 R2 Datacenter key would be nice as well.

Assuming that you do have the above, is the Ground worth it? I think the answer, despite the pain, effort, and time (I’m pretty sure this must be a world record for me for the length of a single blog post) is, “Yes, absolutely!”

You get a professionally installed EPM instance that is right there on your laptop/PC without the AWS charges. That’s pretty cool. And you (and Dan and I) got to use, and learn, a whole bunch of tools that are pretty darn useful. All I can say is that I will be getting a Dell Precision 4600 or 4700 in the near future. That’s putting my money where my mouth is.

I hope you enjoyed the multiple hacks.

And a big thanks to Dan Pressman and Richard Philipson for helping out with this monster of a post.

Running the metavero.com AMI on your laptop

Huh? How can the Cloud (aka Amazon Web Services) run on the Ground (aka your laptop)? How do you run an Amazon Machine Image outside of Amazon’s cloud? Specifically, can the recently released 11.1.2.2 AMI be run on my laptop?

Read on, and all will be revealed. It’s a bit involved but oh so doable. And most definitely one of my more satisfying hacks although, to be honest, I am just combining ideas other people came up with. So that would be in the best tradition of programmers then, i.e., I am stealing their work and applying it to my problem. :)

One thing to note – this will be a multipart blog post as there are many steps. I want to spread out the work and give you time to digest this in bits.

A special note of thanks

Dan Pressman, Essbase wizard extraordinaire, was my QC guy on this post and contributed a lot towards figuring out how we (him, me, and you too) could get the licensing straight. Yes, it’s a blog about Essbase Hacking, but not that kind of hacking.

In any case, this is sort of a long blog post (60 plus pages in Word, which is where I initially type up all this stuff – fear not, there are a ton of pictures, but still there is a lot going on here) with a lot of steps (sorry, what we’re doing ain’t all that easy). In line with good testing practices, he who writes the solution shouldn’t test it. Dan has it all working, and well. I will explain the differences between his eventual solution and mine later.

What skills will you learn?

As I’ve written before, I view these posts in two ways: a way of disseminating useful information, and a way of forcing self-training. The first point is hopefully obvious; the second is a simple fact of my (and maybe your) life – I am in an industry that constantly challenges me to learn, I am somewhat lazy (you should see the state of my office – the floor is almost visible through the papers), and doing unusual but job-related things makes me really learn how to do something.

And that’s why I’m so excited about this post from a skills perspective. Here’s a high level list of what you will understand if you follow through this whole thing:

- Amazon Web Services (AWS) Cloud architecture including Elastic Compute Cloud (EC2) and Simple Storage Service (S3)

- Interacting between the Cloud and your local machine

- VMWare conversion tools

- EPM 11.1.2.2’s resource requirements

- Windows licensing (more complicated than you might think)

Why oh why would you want to do this?

With that introduction out of the way, here’s why you will likely want to give this a try, or at least read through the adventure that is running the Cloud on the Ground.

Cost

The initial impetus for this is mostly (at least on my part) all about cost. I love AWS, aka the Cloud, to pieces, but I can spend $150 or more per month on it. That’s acceptable, but I am a one-man band, and I have to try to watch every penny.

Ease of EPM installation

That is the beauty of this approach – I am not a good installer of EPM products (to put it ever so mildly), but I know someone who is. He has very generously shared his knowledge with the EPM community so we can all play with 11.1.2.2 in the Cloud. If I take John’s work and bring it down to my laptop, aka the Ground, I have a competently installed EPM system on my laptop and I don’t have to install anything EPM-related whatsoever.

Remote access

When you use the Cloud, you must have an internet connection. Port 3389 (that’s what Terminal Services aka Remote Desktop uses by default) blocked by your company’s firewall? Too bad. On a plane, or somewhere in public without a good internet connection? Too bad, yet again.

Running the Cloud on the Ground only requires your laptop and a battery big enough to keep it going.

Security

I am 100% uncomfortable (and you should be, too) putting real anything out in the Cloud, at least with the AMI as it is provided. Yes, AWS EC2 is inherently secure, assuming you haven’t used a 0.0.0.0/0 rule (which lets in every address on the internet) in your security groups, but John’s AMI is really not fit for production data until it has been hardened. Think VPNs, hardened usernames and passwords, etc., etc. In other words, real IT (you know, what you or your clients have in house) security. I’m not suggesting that putting this on a laptop makes it more secure (think of stolen laptops, key logging software, Trojans, etc.) but there is at least some physical security on a laptop (the Ground) that simply isn’t there when it’s on the Cloud. I still wouldn’t put sensitive financial information on my laptop but I might not have a fit if I had less sensitive data on my local hard drive.
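
A quick aside on that security group rule, because the suffix matters: in CIDR notation the rule that admits the whole internet is 0.0.0.0/0, while a /32 suffix matches exactly one address. The sketch below uses Python’s standard `ipaddress` module to show the difference. The addresses are documentation-range examples, not anything from my AWS account.

```python
import ipaddress


def rule_size(cidr):
    """Number of IPv4 addresses a security group CIDR rule admits."""
    return ipaddress.ip_network(cidr).num_addresses


def rule_allows(cidr, client_ip):
    """True if client_ip falls inside the CIDR range of the rule."""
    return ipaddress.ip_address(client_ip) in ipaddress.ip_network(cidr)


if __name__ == "__main__":
    stranger = "198.51.100.7"          # example address, stands in for "anyone"
    my_laptop_rule = "203.0.113.25/32"  # example rule for one known address
    print(rule_size("0.0.0.0/0"), rule_allows("0.0.0.0/0", stranger))
    print(rule_size(my_laptop_rule), rule_allows(my_laptop_rule, stranger))
```

Locking port 3389 down to a /32 for your own address is the narrowest rule you can write; 0.0.0.0/0 is the widest.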

It’s a cool hack

This is Cameron’s blog for Essbase Hackers, right? Isn’t this most certainly a hack? ‘Nuff said.

Why would you not want to do this?

Despite the cost savings, ability to connect anywhere, and overall geeky coolness of this approach, there are definitely some reasons why you would not want to do this.

Performance

I used to think the Cloud’s AWS m2.xlarge instances weren’t all that fast. Oh, how wrong I was. They are blazingly fast, at least compared to my poor, almost three year old Ground-based laptop. Blindingly fast in fact, with as much room for expansion as anyone could ever want. Maybe if I had a bigger laptop I wouldn’t think this way, but with the old Latitude 6500 I have, trust me, the Cloud is many times faster. Yes, this is a great reason to go buy a faster laptop, but I am trying to get as much time out of this thing as I possibly can. On the other hand, watching Task Manager pegged at 100% a lot of the time is getting old.

Capacity

As I wrote above, the Ground requires a big laptop, or at least one more capable than mine as I am really pushing its limits. At the bare minimum, 64 bits (you would be surprised how many people still have 32 bit laptops – it isn’t going to be enough by a country mile), eight gigabytes (16 would be much, much better, even though the EPM instance is a compact deployment) of RAM, at least 100 gigabytes, preferably more, of free disk space, and a dual CPU. These are the bare minimum specs – believe me when I tell you that my system is usable, but only just. It can be very, very, very slow at times.
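
Those minimums are easy to turn into a checklist. The sketch below is only my numbers from the paragraph above written down as code; the `Laptop` class and `assess` function are made up for illustration, and I am reading “dual CPU” as two processors or cores. It splits the result into blockers (don’t bother) and warnings (it will work, slowly).

```python
from dataclasses import dataclass

MIN_RAM_GB = 8            # bare minimum from the post
RECOMMENDED_RAM_GB = 16   # "much, much better"
MIN_FREE_DISK_GB = 100
MIN_CPUS = 2              # assumed reading of "dual CPU"


@dataclass
class Laptop:
    is_64_bit: bool
    ram_gb: float
    free_disk_gb: float
    cpus: int


def assess(laptop):
    """Return (blockers, warnings) for running the converted VM locally."""
    blockers, warnings = [], []
    if not laptop.is_64_bit:
        blockers.append("needs a 64 bit machine")
    if laptop.ram_gb < MIN_RAM_GB:
        blockers.append(f"needs at least {MIN_RAM_GB} GB of RAM")
    elif laptop.ram_gb < RECOMMENDED_RAM_GB:
        warnings.append(f"usable, but {RECOMMENDED_RAM_GB} GB of RAM is much better")
    if laptop.free_disk_gb < MIN_FREE_DISK_GB:
        blockers.append(f"needs at least {MIN_FREE_DISK_GB} GB of free disk")
    if laptop.cpus < MIN_CPUS:
        blockers.append(f"needs at least {MIN_CPUS} CPUs")
    return blockers, warnings


if __name__ == "__main__":
    # Roughly my machine: 64 bit, 8 GB, enough disk, dual CPU.
    print(assess(Laptop(is_64_bit=True, ram_gb=8, free_disk_gb=120, cpus=2)))
```

A machine like mine comes back with no blockers and one warning, which matches my experience: usable, but only just.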

Sharing

When you run the 11.1.2.2 AMI on the Ground, you are pretty much making this a box that only you can talk to. Yes, you could stick it on a LAN and make it available that way, but even if you have a powerful laptop, it’s not going to be a substitute for a true server.

Flexibility

One of the many great things about the Cloud is that you can scale servers, clone them, and do all sorts of awesome things with them that only the Cloud or an incredibly indulgent IT department brings. None of those possibilities are there with a laptop. You must keep that in mind when going down this path.

Time

The converting, compressing, downloading and enabling of all of this to work on the Ground is not exactly quick. Some of this is down to how fast your internet connection is – I have a DSL connection and fast it is not. It literally took me two days to download the compressed VM from the web. Did I mention I had to set up a way to host those files (I will reveal how to do that below)? For sure I wouldn’t attempt this on a cell phone plan.
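
To put “two days” in perspective, here is the back-of-the-envelope arithmetic as a sketch. It assumes the 29 gigabyte compressed download mentioned later in this post, treats two days as 48 hours of continuous downloading, and uses decimal gigabytes and megabits, none of which I measured precisely.

```python
def implied_mbit_per_s(size_gb, hours):
    """Average line speed implied by moving size_gb in the given hours."""
    bits = size_gb * 8 * 1000 ** 3
    return bits / (hours * 3600) / 1000 ** 2


def download_hours(size_gb, mbit_per_s):
    """Hours needed to move size_gb at a sustained mbit_per_s."""
    bits = size_gb * 8 * 1000 ** 3
    return bits / (mbit_per_s * 1000 ** 2) / 3600


if __name__ == "__main__":
    dsl = implied_mbit_per_s(29, 48)
    print(f"Implied DSL speed: {dsl:.2f} Mbit/s")
    print(f"15 GB at that speed: {download_hours(15, dsl):.1f} hours")
    print(f"29 GB at 20 Mbit/s: {download_hours(29, 20):.1f} hours")
```

Under those assumptions my line averaged a bit over 1.3 Mbit/s, and trimming the archive to 15 gigabytes would have cut the download to roughly a day. Plug in your own connection speed before deciding whether this is a weekend project or an afternoon one.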

Cost

Huh? Isn’t the Ground supposed to be cheaper? Well, only if you already have a valid Windows 2008 R2 Datacenter license. That’s right, the license that Amazon has with Microsoft is not valid when it’s transferred to your laptop. Sooner or later (like within three days) you will start getting warnings about Windows not being activated. You are going to need that license to do the activation. And don’t think you can find cracked licenses (this is a hacker’s, not a pirate’s, blog).

The retail cost is $2,389 and that’s without the ability to actually connect a client to it (aka CALs). Yes, that is a little eye-watering and yet another reason why AWS is so awesome in so many ways. However, if you’ve read this far you are determined to do it, and there are cheaper ways of getting a legitimate license. Beyond hitting your IT department up for a license, or your consulting company management, some legal alternatives are Microsoft’s Action Pack, MSDN, TechNet, and Bizspark. I’m sure there are other ways to do it as well but those are the ones I know of.

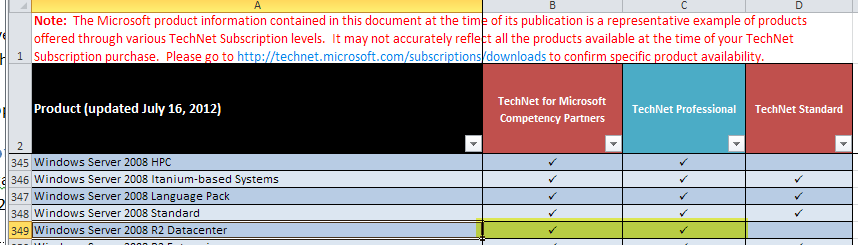

NB – The cheapest way to legitimately get a 2008 R2 Datacenter license is TechNet Professional. You must qualify for the license, but once done, the current cost is $349 for the first year and $249/year renewal. Here’s a snippet of the TechNet Product List. Note that you must either have TechNet Professional or TechNet for MS Competency Partners (that’s how Dan and I qualify) to get 2008 R2 Datacenter.

One other thing to note – TechNet grants licenses for evaluation purposes only. Apply the key to a production environment and you are in violation of your license. I will let you figure out how much risk you enjoy, but any prudent business avoids legal nastygrams as much as possible.

Also, don’t forget that you have to have a laptop sufficiently large and powerful to run all of this as detailed above.

Don’t be scared off by the upfront cost as ultimately I think the Ground is the cheapest way to run the 11.1.2.2 AMI, but just know that there are many reasons to stick with the Cloud. I have a bunch of interesting things I want to test with Essbase 11.1.2.2 that I will share in the not too distant future – for sure I’ll POC on my laptop but right after that I will be going back to AWS.
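
For the penny watchers, the break-even arithmetic is short enough to sketch. It uses only the figures quoted in this post ($150 a month on AWS, $349 then $249 a year for TechNet Professional, $2,389 retail) and deliberately ignores the cost of a bigger laptop, your time, and S3 download charges, so take it as a rough guide.

```python
import math

AWS_MONTHLY = 150        # my rough monthly AWS spend
TECHNET_FIRST_YEAR = 349
TECHNET_RENEWAL = 249
RETAIL_LICENSE = 2389


def months_to_break_even(license_cost, aws_monthly=AWS_MONTHLY):
    """Whole months of avoided AWS spend needed to cover a license cost."""
    return math.ceil(license_cost / aws_monthly)


if __name__ == "__main__":
    print("TechNet, first year:", months_to_break_even(TECHNET_FIRST_YEAR), "months")
    print("TechNet, renewal:", months_to_break_even(TECHNET_RENEWAL), "months")
    print("Retail license:", months_to_break_even(RETAIL_LICENSE), "months")
```

On those numbers TechNet pays for itself within a quarter, while the retail license takes well over a year of avoided AWS bills.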

Required resources to make this all happen

With this stuff, you are on your own

- An ability to follow directions

- An Amazon Web Services account

- A desire not to incur AWS charges

- A 64 bit PC/laptop with at least eight (but very preferably 16) gigabytes of RAM

- A fast internet connection

- Lots of disk space (about 100 free gigabytes, but more would likely be better)

- A valid Windows 2008 R2 Datacenter license

- Patience

Here are the tools you need to grab from the web:

Whew, that’s an awful lot of prerequisites, isn’t it? And yet what I am going to show you is pretty awesome, if I do say so myself. Ahem.

Converting the AMI

The trick to this is to use VMWare’s VCenter Converter software. It is not actually meant specifically for AWS but is instead a way to virtualize any Windows (and Linux) machine. The fact that you will virtualize a cloud machine is irrelevant to VCenter Converter, although it is geeky cool.

Give yourself some drive space

Unless you started up the 11.1.2.2 AMI with a really big drive, and even then for best performance, you will need to create a drive to do all of your converting, zipping, etc. work. As with so many things in AWS, it’s really quite simple. This is all assuming that the 11.1.2.2 AMI is up and running.

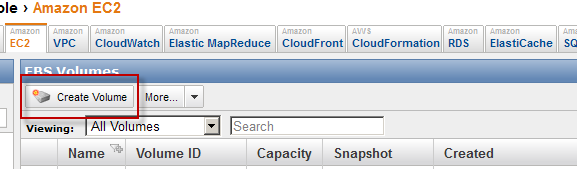

Create a new volume

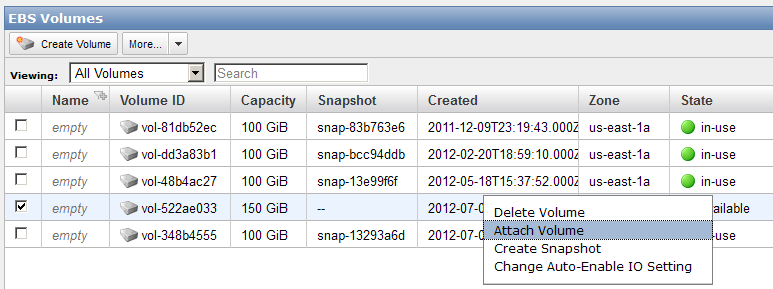

In the EC2 interface, create a new volume by clicking the eponymous button:

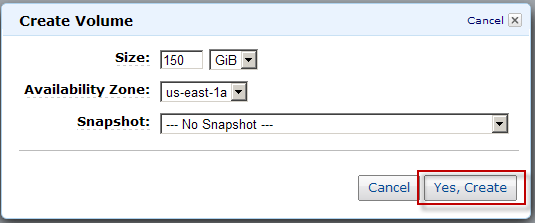

Set the size

This one is set for 150 GB.

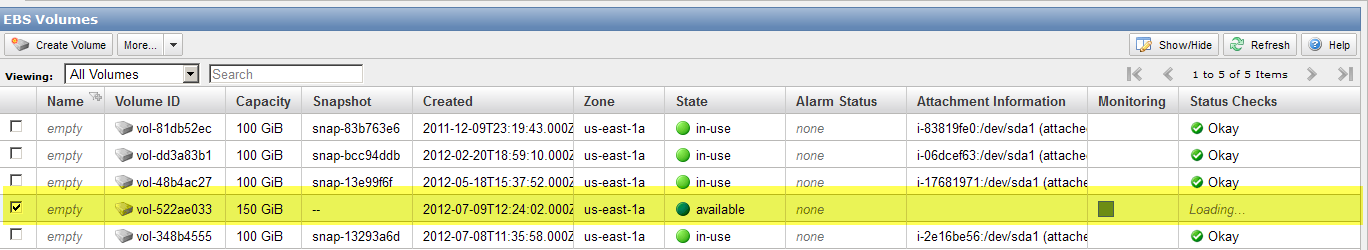

Go check to make sure it’s done

This is generally quite fast. The State needs to be “available”.
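
I did all of this by clicking in the EC2 console, but the same create, check, and attach sequence (attaching is the step that follows) can be written as AWS command line calls. The sketch below only assembles the commands; it assumes the AWS CLI is installed and configured, and every identifier in it (the availability zone, the `vol-EXAMPLE` and `i-EXAMPLE` IDs, the `xvdf` device name) is a placeholder. In real life the volume ID comes back from the create call, and the volume has to be created in the same availability zone as the instance.

```python
def volume_commands(size_gb, zone, volume_id, instance_id, device="xvdf"):
    """Return AWS CLI argument lists for creating and attaching a volume."""
    return [
        # 1. Create the volume in the instance's availability zone.
        ["aws", "ec2", "create-volume",
         "--size", str(size_gb), "--availability-zone", zone],
        # 2. Check its state; wait until this reports "available".
        ["aws", "ec2", "describe-volumes", "--volume-ids", volume_id,
         "--query", "Volumes[0].State", "--output", "text"],
        # 3. Attach it to the running instance.
        ["aws", "ec2", "attach-volume", "--volume-id", volume_id,
         "--instance-id", instance_id, "--device", device],
    ]


if __name__ == "__main__":
    # Placeholder values for illustration only.
    for args in volume_commands(150, "us-east-1a", "vol-EXAMPLE", "i-EXAMPLE"):
        print(" ".join(args))
```

For a one-off like this the console is arguably quicker; the commands are mostly useful if you expect to rebuild the work drive more than once.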

Attach it to your running instance

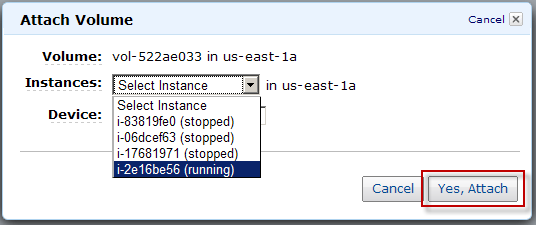

This is a two step process:

Format that drive

So your Windows box now has a new hard drive. Before Windows can address it, it needs to be initialized and formatted.

Initializing the drive

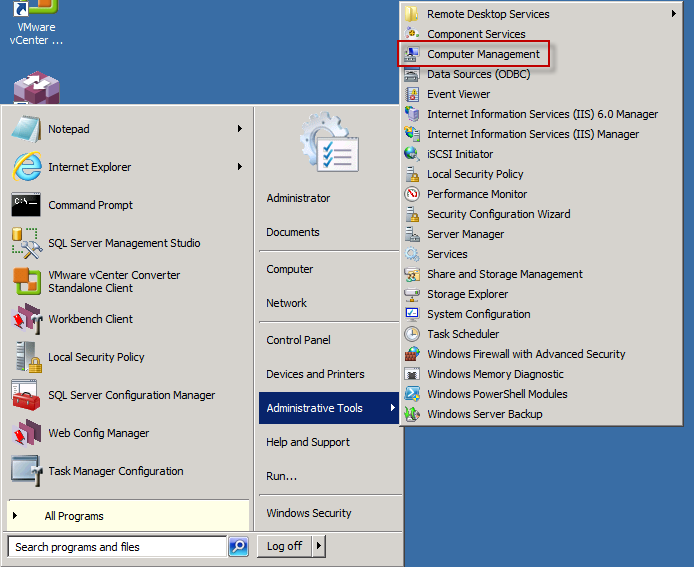

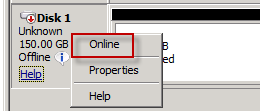

NB – You can also just go to Start->Run->diskmgmt.msc. Below is the graphical way.

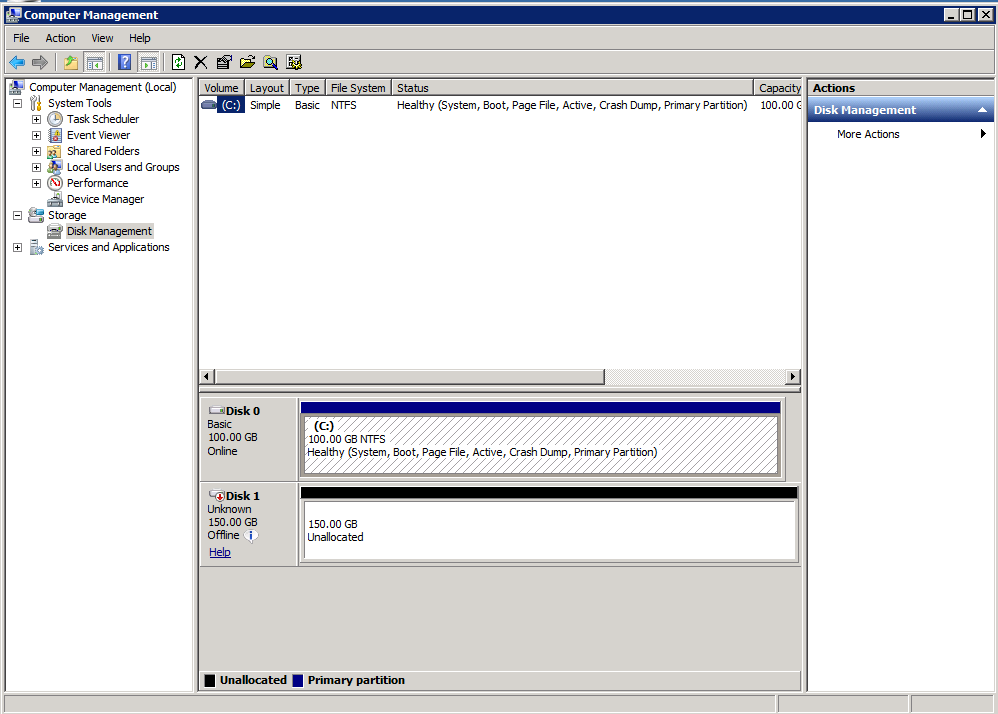

Open up Computer Management. Then Disk Management

Then Disk Management Set the drive online.

Set the drive online.

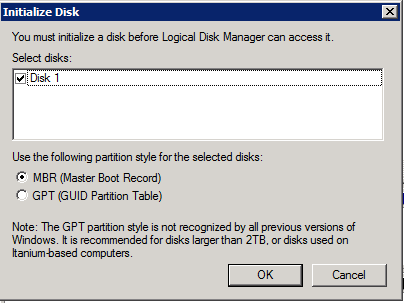

Then initialize.

Then initialize.

Take the Initialize Disk defaults.

Take the Initialize Disk defaults.

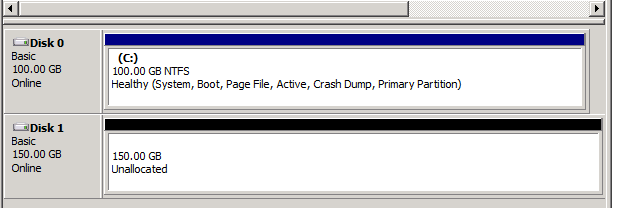

You now have an initialized, but unformatted drive.

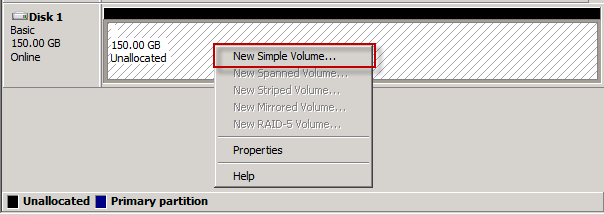

You now have an initialized, but unformatted drive. Right click on that Unallocated drive and select New Simple Volume.

Right click on that Unallocated drive and select New Simple Volume. Follow the wizard, again taking the defaults.

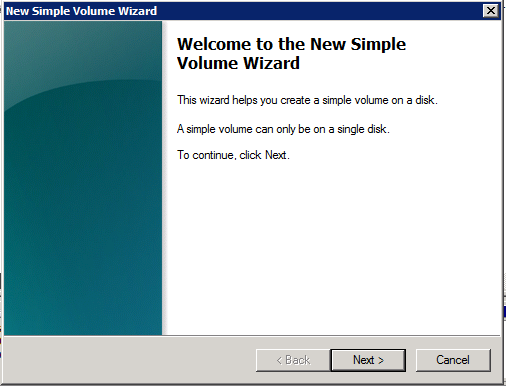

Step 1 – Beginning the Simple Volume Wizard

Follow the wizard, again taking the defaults.

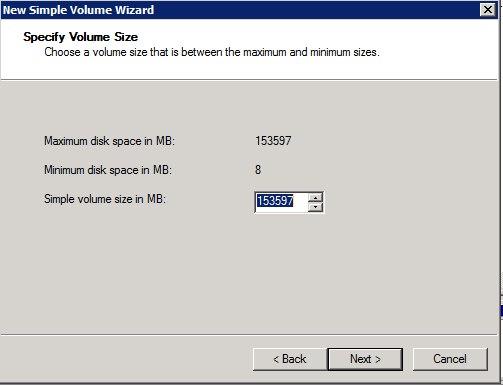

Step 1 – Beginning the Simple Volume Wizard Step 2 – Define how big it should be (go for all of the volume space)

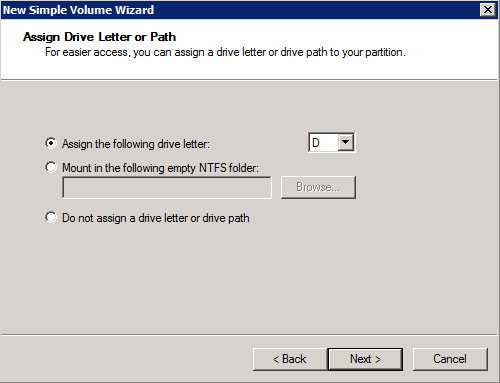

Step 2 – Define how big it should be (go for all of the volume space) Step 3 – Assign a drive letter.

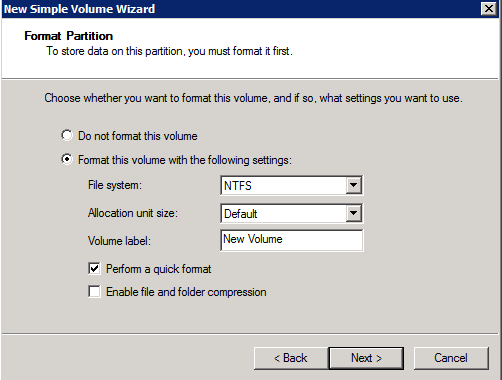

Step 3 – Assign a drive letter. Step 4 – Format it, using the quick format option

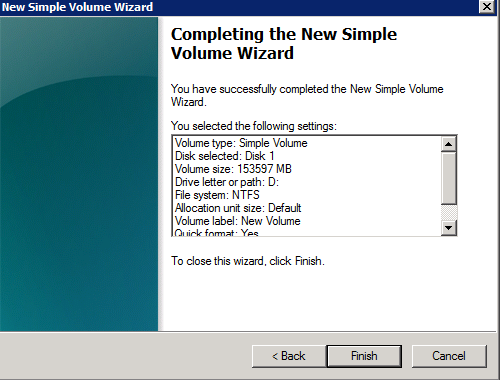

Step 4 – Format it, using the quick format option Step 5 – You are done!

Step 5 – You are done!

Click on Finish and you should see this:

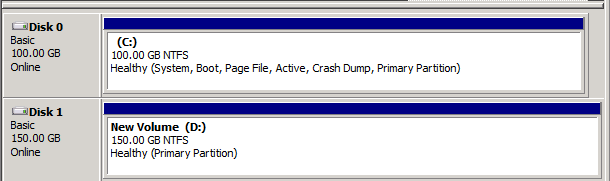

Click on Finish and you should see this: Here it is in Explorer

Here it is in Explorer

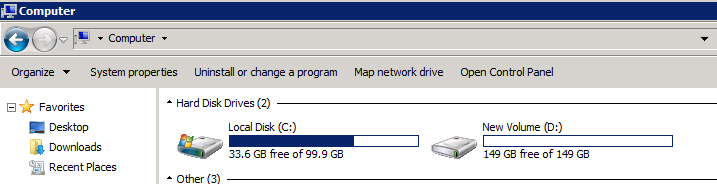
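
The graphical steps above (online, initialize, new simple volume, quick format, drive letter) have a scripted equivalent in Windows’ `diskpart` tool. I used the wizard, so the sketch below is untested against the AMI; the disk number, drive letter, and volume label are placeholders, and I am assuming the Initialize Disk default corresponds to an MBR partition style. Check the disk number with `list disk` first, because pointing this at the wrong disk would be a very bad day.

```python
def diskpart_script(disk_number, letter, label="VMWork"):
    """Return diskpart script text that brings a new, empty disk into use."""
    lines = [
        f"select disk {disk_number}",
        "online disk noerr",               # ignore the error if already online
        "attributes disk clear readonly",  # new disks can arrive read-only
        "convert mbr",                     # initialize (assumed MBR default)
        "create partition primary",        # use all of the volume space
        f'format fs=ntfs label="{label}" quick',
        f"assign letter={letter}",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Placeholder disk number and letter; verify with "list disk" first.
    print(diskpart_script(1, "D"), end="")
```

Save the output to a text file and run it with `diskpart /s` followed by the file name from an administrator command prompt.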

Before you convert, get rid of unnecessary files

There’s a c:\media directory that contains all of the install binaries. Unless you want to keep them around, get rid of this directory as it is 24.5 gigabytes that you will be compressing and then downloading (slowly) to your laptop. Dan caught this and his download was 15 gigabytes, compressed; mine was 29.

Converting via VCenter Converter

I’ve already given you the download link. You will be installing this on the AWS EC2 instance.

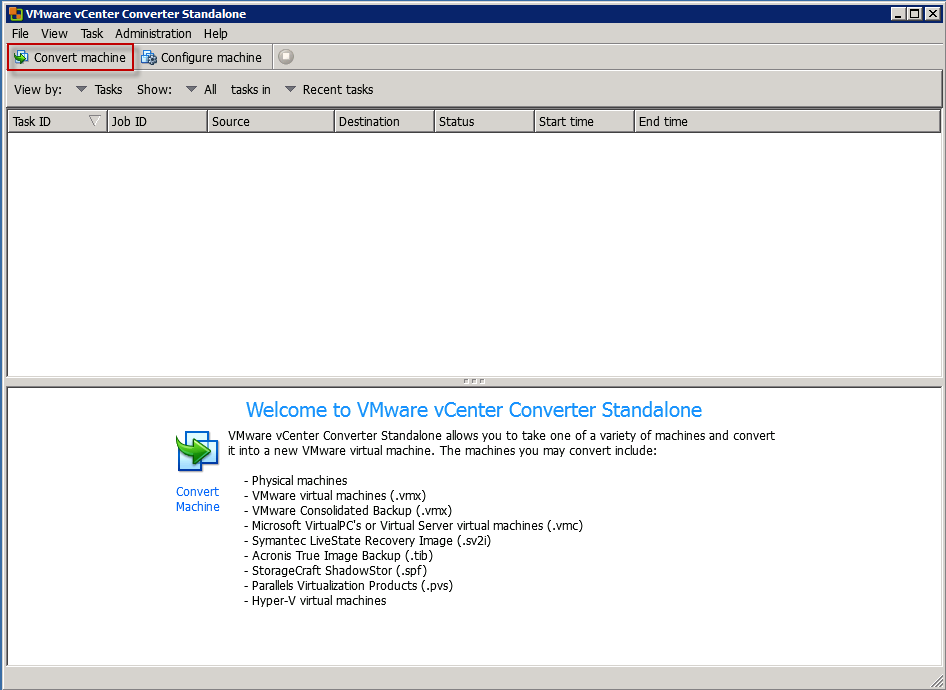

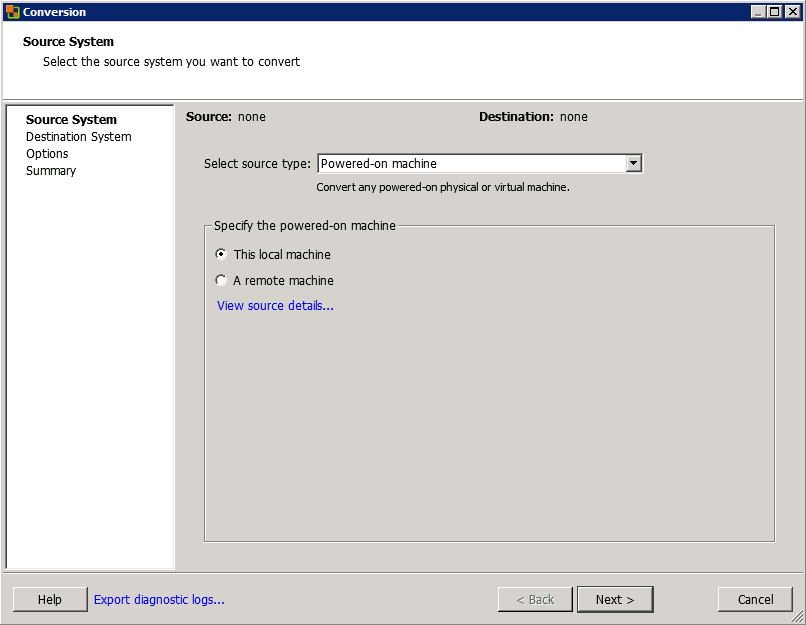

Once installed, launch it. This should be what you see. Click on Convert Machine to get the process going. Select the powered-on machine.

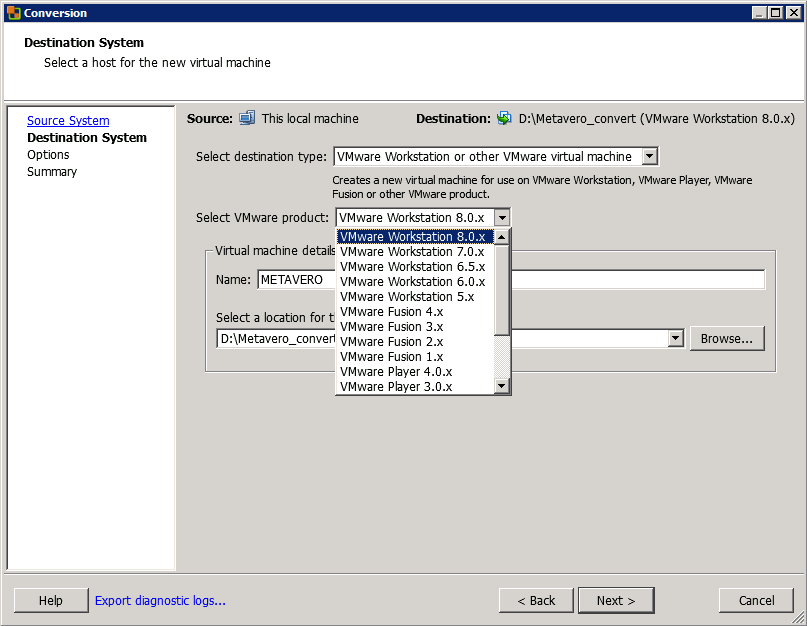

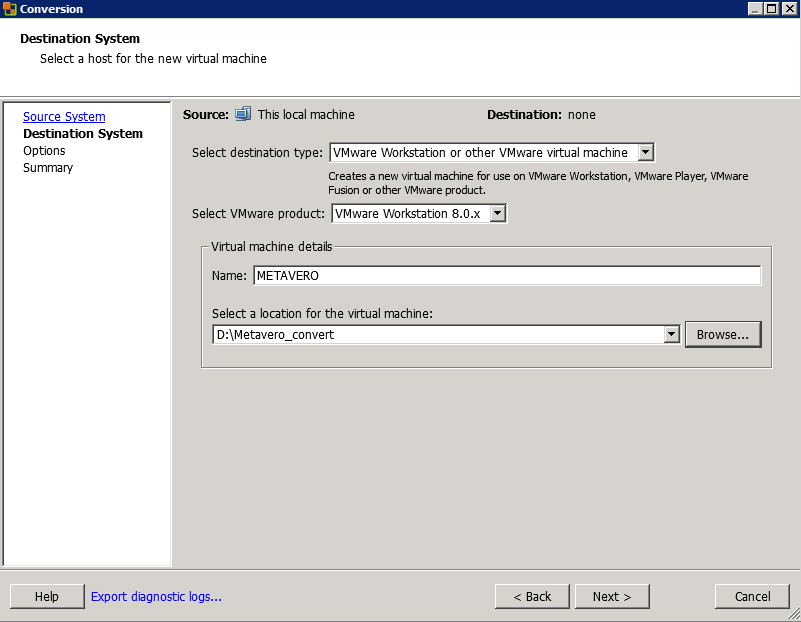

Select the powered-on machine. Select

the destination type and VMWare release, along with a name and a target

folder. Yes, you can make all of this work with the free VMWare Player

although I have not tried doing it with that tool.

Select

the destination type and VMWare release, along with a name and a target

folder. Yes, you can make all of this work with the free VMWare Player

although I have not tried doing it with that tool. In

my case I’m running Workstation 8, so that’s the release I picked but

you can run this with older releases of the tool. Click on Next.

In

my case I’m running Workstation 8, so that’s the release I picked but

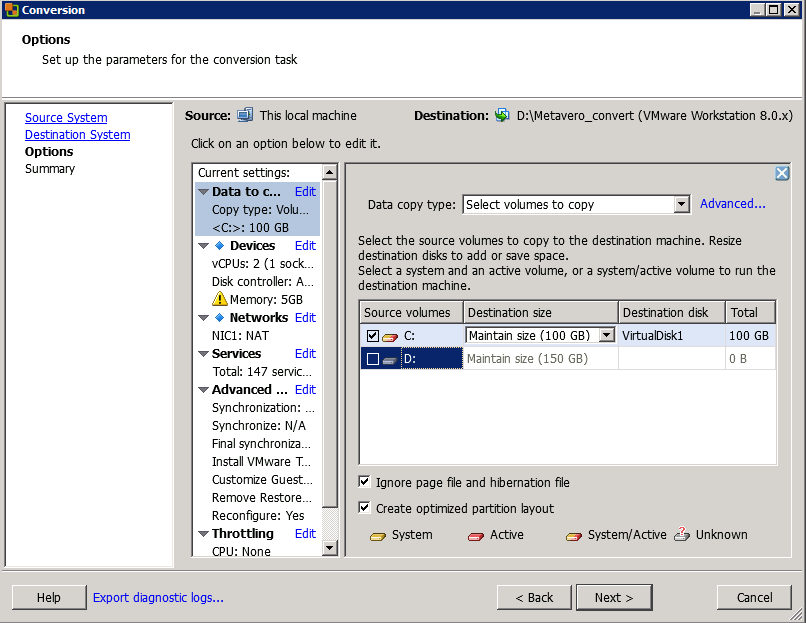

you can run this with older releases of the tool. Click on Next. Get

rid of the D drive – there’s no point in moving it across to your

laptop as all it will contain is a copy of your VM. Which will reside

on your VM. Which is confusing.

Get

rid of the D drive – there’s no point in moving it across to your

laptop as all it will contain is a copy of your VM. Which will reside

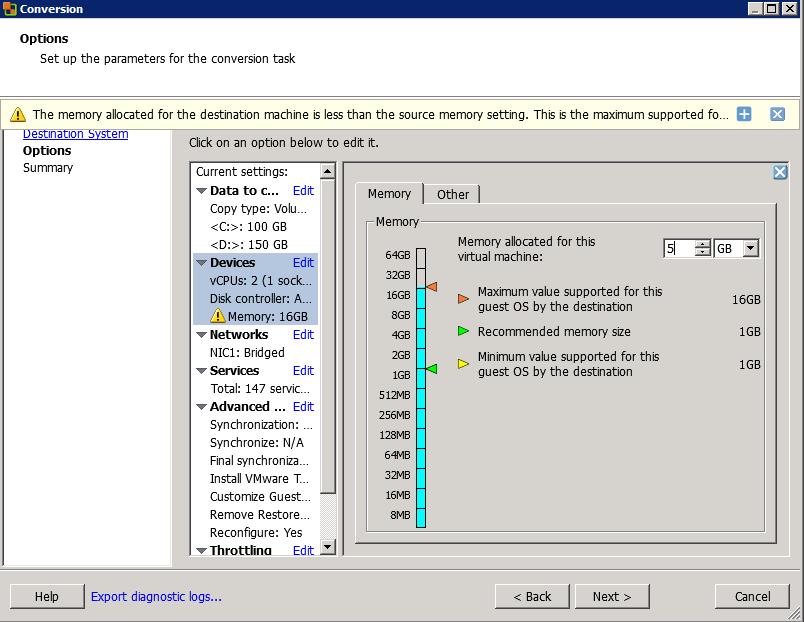

on your VM. Which is confusing. Set

the memory size – in my case, it’s going to be quite a bit smaller than

the 17 gigs that an EC2 m2.xlarge instance provides. Also, although I

didn’t do it in this screen shot you should not convert the D drive – all you want is C.

NB

– I am showing this a bit out of order so the D drive is still

selected. Regardless of me not being able to demonstrate a sequential

process, you need to get rid of the D drive.

Set

the memory size – in my case, it’s going to be quite a bit smaller than

the 17 gigs that an EC2 m2.xlarge instance provides. Also, although I

didn’t do it in this screen shot you should not convert the D drive – all you want is C.

NB

– I am showing this a bit out of order so the D drive is still

selected. Regardless of me not being able to demonstrate a sequential

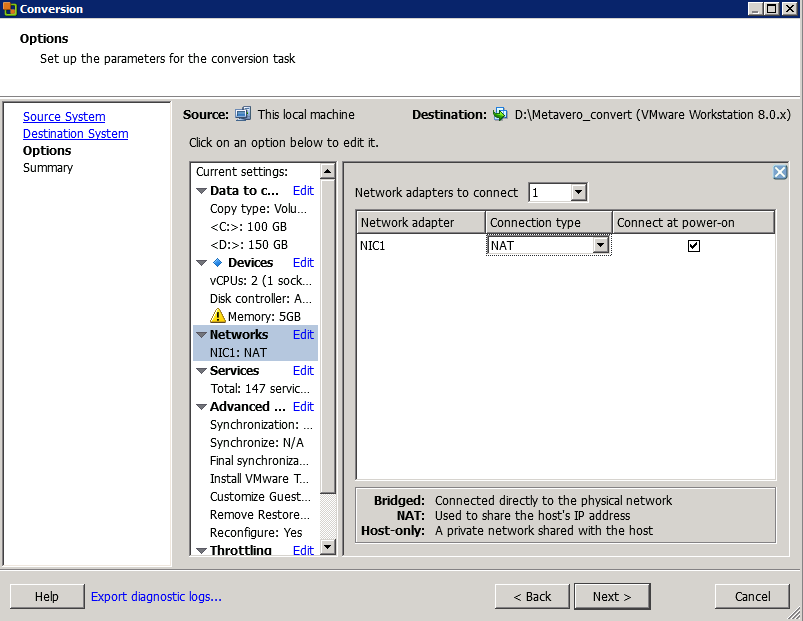

process, you need to get rid of the D drive. Set the network to NAT.

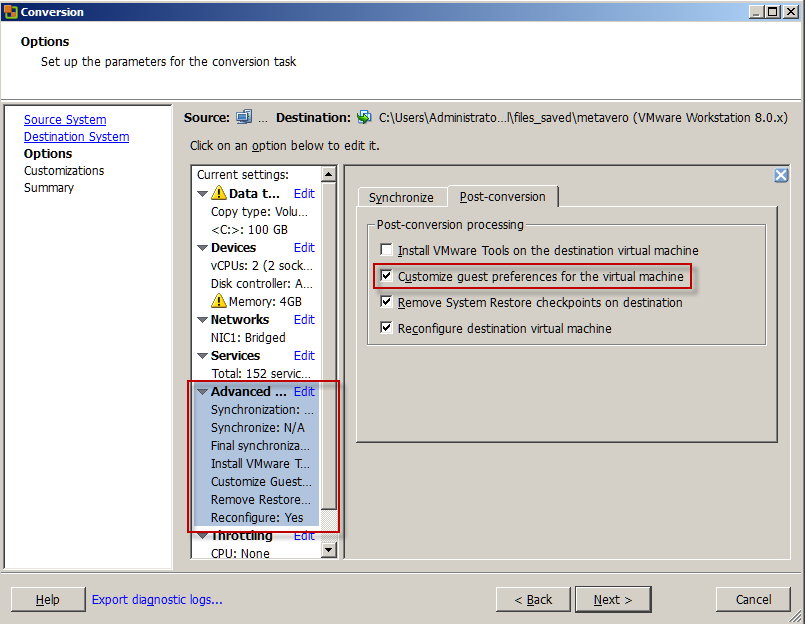

Set the network to NAT. Click on the Advanced section above, then click on “Customize guest preferences for the virtual machine”.

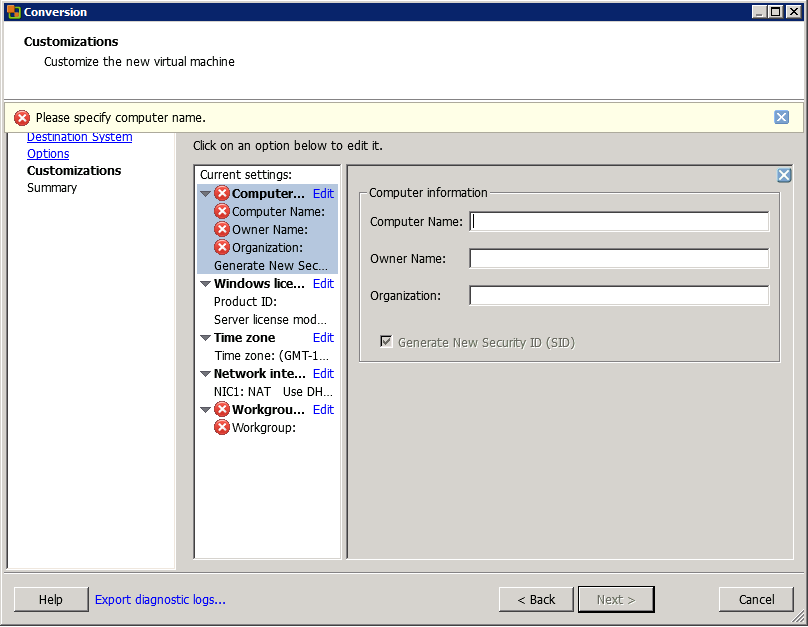

Click on the Advanced section above, then click on “Customize guest preferences for the virtual machine”. That will get you to the Customizations section.

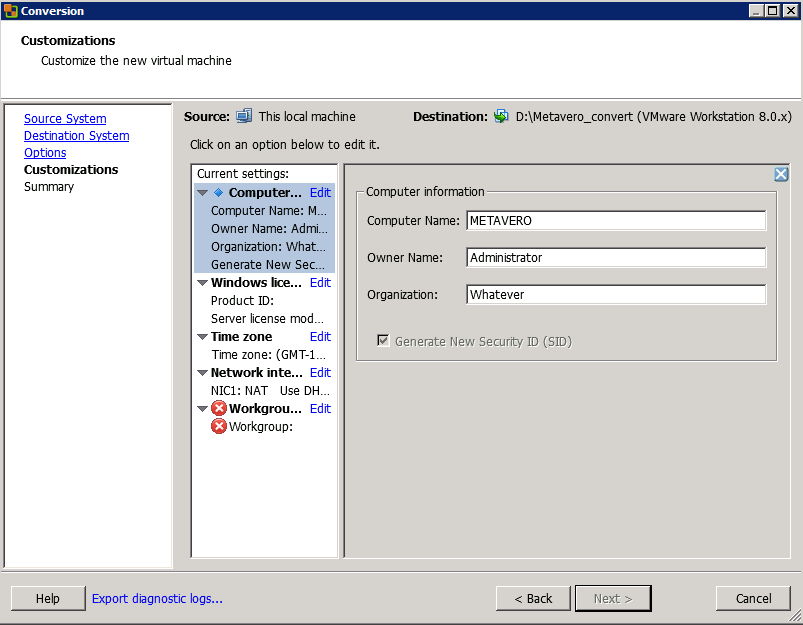

That will get you to the Customizations section. I went with these settings (they seem pretty logical):

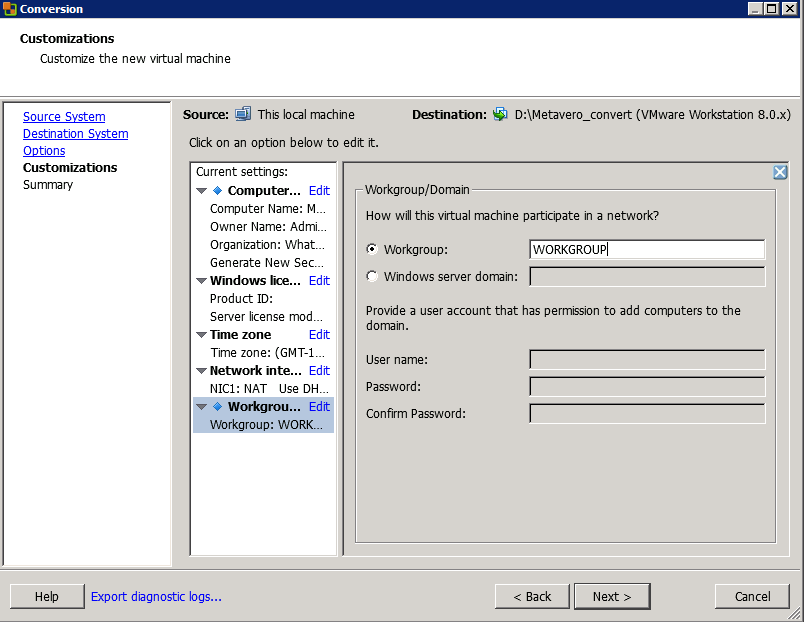

I went with these settings (they seem pretty logical): Set the Workgroup.

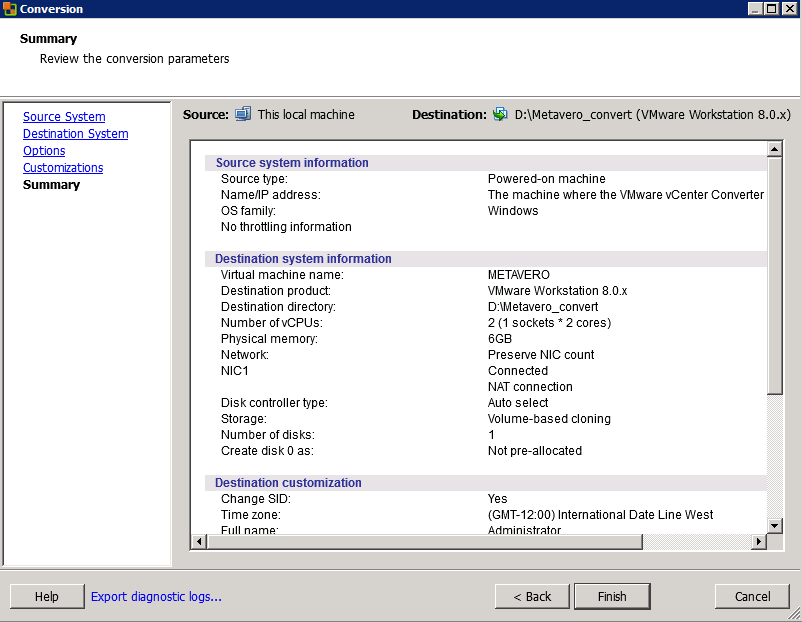

Set the Workgroup. Review the settings and Go Man Go.

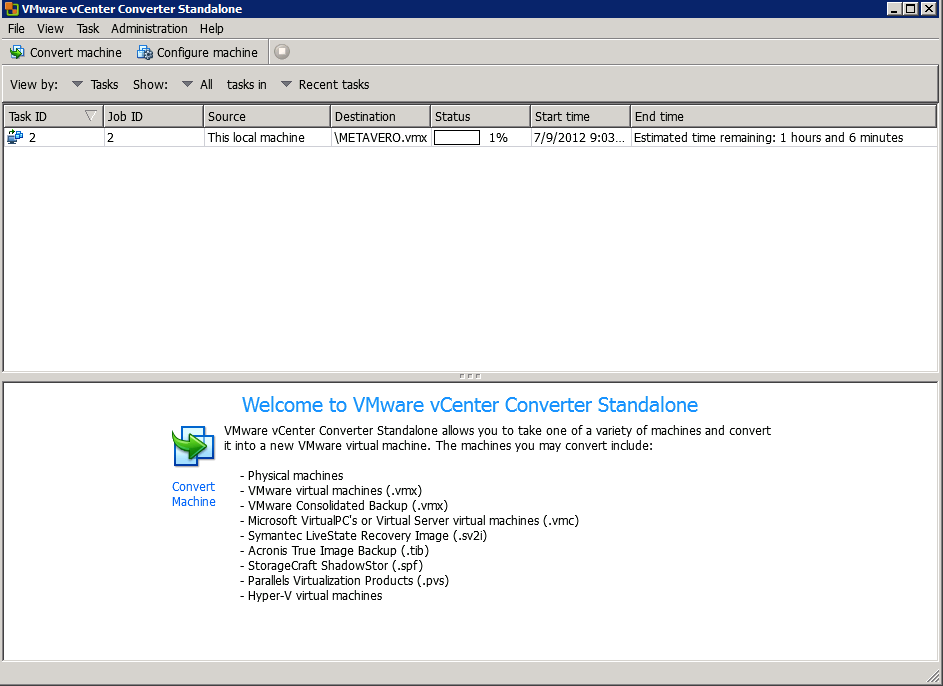

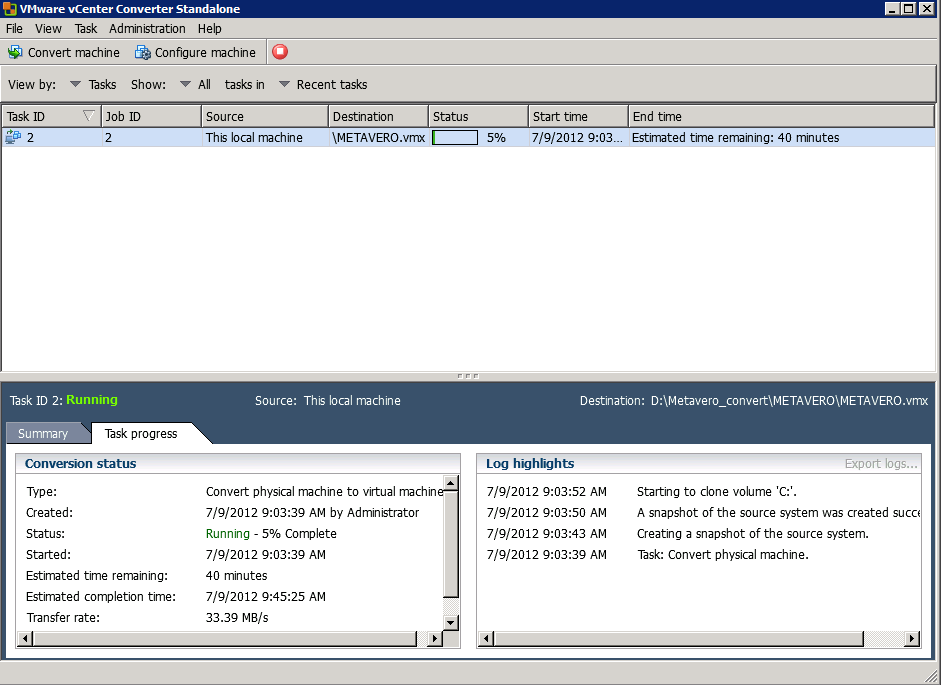

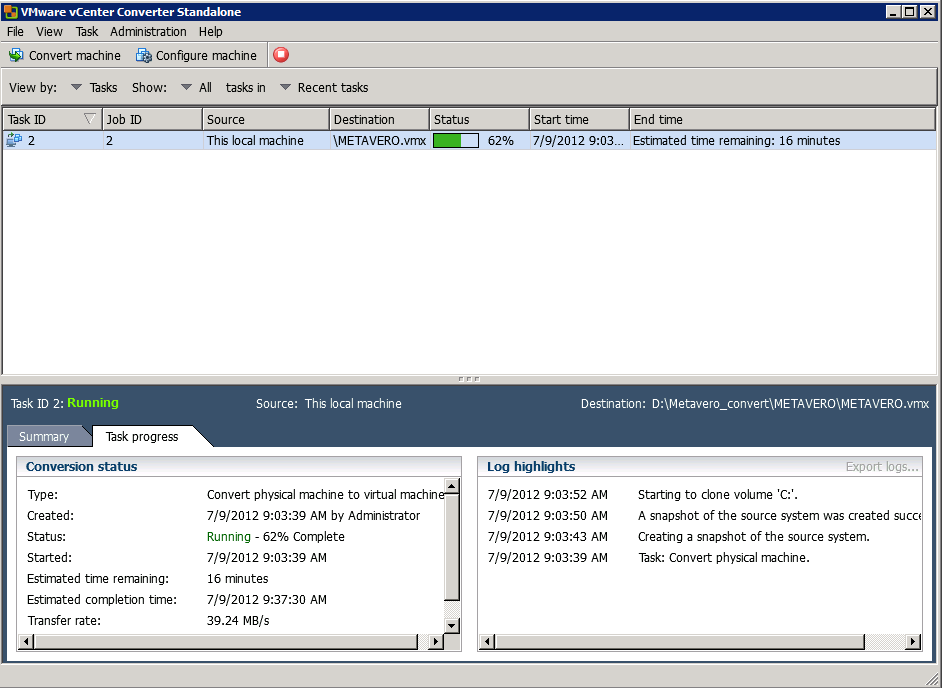

Review the settings and Go Man Go. Now the conversion process begins.

Now the conversion process begins. You can see the process beginning by clicking on the task line.

You can see the process beginning by clicking on the task line. It takes a while.

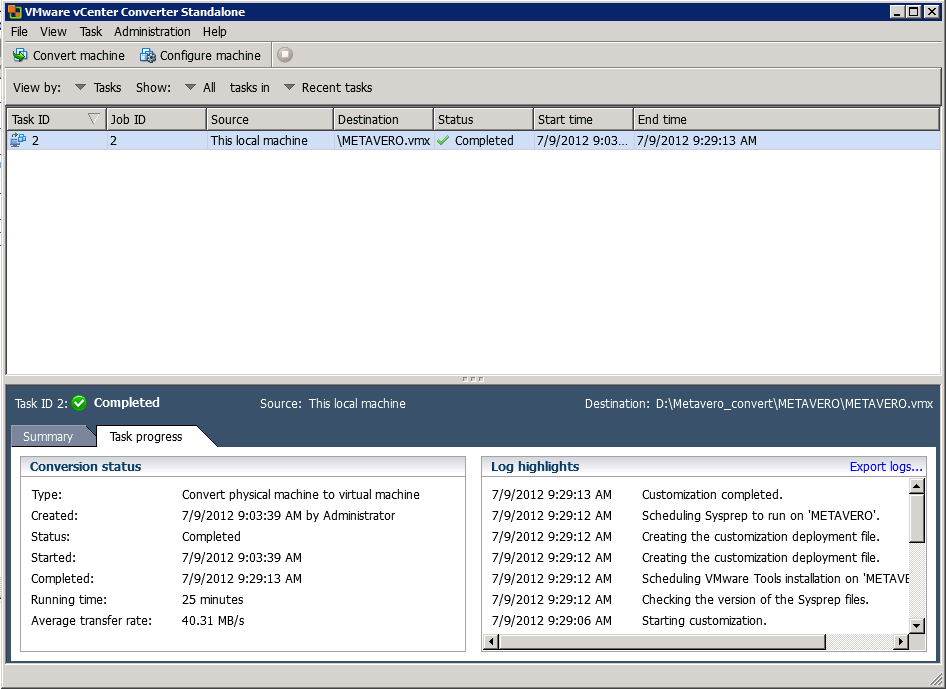

It takes a while. But it will finish.

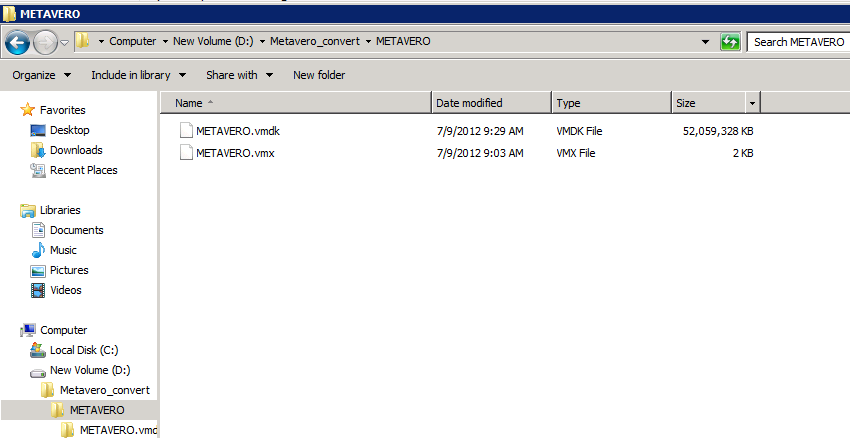

But it will finish. And here it is in Windows Explorer.

And here it is in Windows Explorer.

So where does this leave you?

Aren’t cliffhangers great? I suppose only if the reader has hung around till the end. But you’re here, hopefully, so what do we have and where do we go from here?

At this point you have:

- Added a hard drive in AWS (and seen how trivially easy it is to do)

- Added and formatted that drive in Windows

- Downloaded and installed VMWare’s VCenter Converter

- Converted your metavero.com 11.1.2.2 AMI to a format that VMWorkstation (or whatever) can read

I wish I could tell you that all you now need to do is download the VMWare machine to your local drive and off you go. Alas, it’s not that easy, but the next and final post will take you through all of the steps that Dan and I painfully worked through. It honestly isn’t that bad but there are a few twists and turns.

Hope you’ve enjoyed the hack!