Prequel to the cool stuff

So in part 1, I reviewed how to create a HBR, substitute in local variables to read a Planning form’s Run Time Prompts (RTP), and suggested that it might be worthwhile to read this post.

The code sample I used doesn’t do any fancy allocation or calculation. It just aggregates the database using form POV members.

You may be scratching your head, thinking, “Why on earth is he banging on and on about this? Just issue an AGG(“Entity”, “Segments”) statement and be done with it.”

Zoiks! If I wrote one line of code to consolidate a database I would be out of a blog post topic – This Is A Bad Thing.

Secondly, Why Would I Want To Do That? (Ex-Comshare employees/customers/partners know of whom I speak when I write that.)

Because thirdly, and most importantly to you, I can make that database aggregate much, much more quickly.

The Trouble with AGG

Other than sounding like a misquote from a sketch in “Monty Python and the Holy Grail”, what is wrong with an “AGG” when applied to the Entity and Segments dimensions?

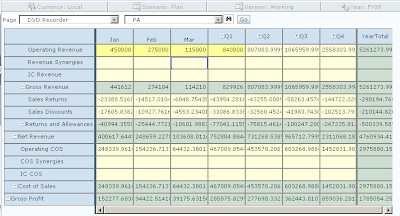

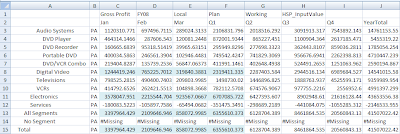

Think about it in the context of the form as shown below:

But if I wrote:

AGG(“Entity”, “Segments”) ;

I would be also be aggregating a New England state and a totally obsolete electronic media, if there was any Gross Profit data for those two members. After all, AGG is kind of a blunt axe.

(For you Intelligent Calc lovers out there, yes, I could use the blocks’ clean or dirty status to not calculate upper level data that already exists, but good luck doing that in a real world Planning application. There will be clean/dirty blocks all over the place and it is very difficult to keep this approach from going pear shaped.)

Having disposed of the Intelligent Calc option, while an AGG statement will certainly come up with the right result, Why Would You Want To Do That when you only changed data at the PA and DVD Recorders intersection? Why aggregate data combinations for all of the other level zero Entity and Segment combinations that haven’t even changed?

(These questions do have a point, so bear with me.) You might answer, what else am I to do? How do I consolidate my dimensions if I don’t use AGG or CALC DIM?

Tricky, innit?

Here’s the trick – you don’t need to calculate the dimension, you only need to calculate the relevant hierarchies. That’s what the calc script above shows and what your HBR can do for your forms.

@IANCESTOR is your friend

@IANCESTOR is your BFF? That I couldn’t say. But it’s going to be your HBR pal from now on because, if you think like Essbase, you can make Essbase only calculate what you want. *This* is hacking Essbase.

Let’s review the way Essbase aggregates a database, per our dear friend, the Database Administrators Guide (DBAG). For the below section we’re going to ignore the Consol database to go along with the DBAG. Just substitute Entity for Product and Segments for Market; they are the first and second consolidating sparse dimensions.

How Essbase aggregates a database

Per the ever-scintillating DBAG, Block Storage Option (BSO) databases calculate dimensions in the following order (we are only going to concern ourselves with sparse, aggregating dimensions), “Sparse dimensions (in the order they display in the database outline)”. See http://download.oracle.com/docs/cd/E10530_01/doc/epm.931/html_esb_dbag/dcacaord.htm and the “Member Calculation Order” section if you need to cite chapter and verse.

Okay, we know the order that Essbase is going to calculate the dimensions. What about the order of the members within the dimensions? This is answered by the “Block Calculation Order” section :

“Essbase calculates blocks in the order in which the blocks are numbered. Essbase takes the first sparse dimension in a database outline as a starting point. It defines the sparse member combinations from this first dimension.”

Using My Very Favorite Essbase Database In The Whole Wide World (MVFEDITWWW), i.e., Sample.Basic, this means that:

“In the Sample Basic database, Product is the first sparse dimension…Product has 19 members…Therefore, the first 19 data blocks in the database are numbered according to the calculation order of members in the Product dimension.”

The DBAG goes on to say:

“The other sparse dimension is Market. The first 19 data blocks contain the first member to be calculated in the Market dimension, which is New York…The next member in the Market dimension is Massachusetts. Essbase creates the next 19 data blocks for sparse combinations of each Product member and Massachusetts.”

This is the important bit:

Essbase continues until blocks have been created for all combinations of sparse dimension members for which at least one data value exists.

Guess what, we have just reviewed how Essbase calculates sparse dimensions from the first sparse dimension to the last one, by dimension and within each dimension. In essence, block by block.

1) Product is aggregated for every level 0 Market member (where data exists in Market – Essbase is smart enough not to calculate combinations that don’t exist).

2) Then Market gets aggregated by every Product (that exists, there’s Essbase being smart again).

Okay, but so what?

If we jump back to the Consol database, you may recall that I claimed you don’t need to calculate all of Entity, do you? Nope. Just the member in the form POV and its ancestors.

You also don’t need to calculate all of Segments. No, sir. Just the Segment that is in the POV and its ancestors.

This is the trick/gimmick/optimization/clever bit/thing you maybe already knew long ago and are now totally disappointed by. Sorry if you’re in the last category – I swear the next post will be better.

How do you do this?

Simple. Let’s assume that the Entity is PA and the Segment is DVD Recorder. Remember, you only need to calculate the relevant branches of the hierarchy. The other level zero/upper level members haven’t changed, so there’s no profit in recalculating them.

Use @IANCESTORS in combination with FIX statements to make Essbase aggregate:

1) The PA ancestor tree for DVD Recorders.

2) The DVD Recorder ancestor tree for the PA ancestor tree.

The results of the above versus that AGG/CALC DIM is exactly the same, only the focused aggregation does it in less than a quarter of the time.

Correction

1) Here’s the form with the original data:

2) Let’s round the numbers up and send to Essbase:

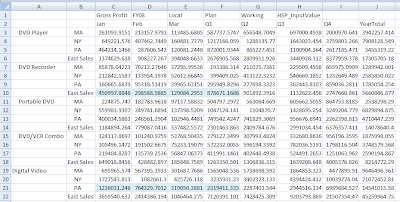

3) Switching to Excel, the blue cells show the data that should be updated. This sheet is easy peasy, as it just shows aggregated dynamic Accounts.

4) And here it is where we expect to see it aggregated one level up, by Entity and Segment.

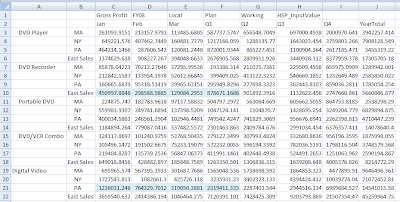

5) And now let’s look at the when the focused aggregation HBR is run.

Notice that it doesn’t matter if we do or don't aggregate MA, NY, DVD Player, Portable DVD, and DVD/VCR combo. Only PA and DVD Recorders changed, so only their parents need be aggregated. In other words, only aggregate parents whose children's values change, and leave the rest be.

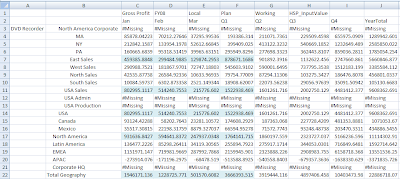

6) Here it is rolled up by Entity.

7) And rolled up by Segment.

The Payoff

What does this mean from a performance perspective (faster=better)?

Looking at the Plansamp.log file, we can see that plain old AGG took almost 7 seconds:

Where the focused aggregation didn’t even make it to 2.5 seconds.

In the Real World

Just yesterday I benchmarked a real Planning HBR with the AGG versus focused aggregation approach. How about 180 seconds versus 24 seconds? Now we’re talking 1/8 of the time. This is powerful medicine.

YMMV; these are the results I got for my client’s Essbase database. Every database is different, so my performance improvement won't necessarily map to your application. Regardless, the above technique can make “big” Planning applications fast and give you the run on save performance your users demand.

See, I love Hyperion Business Rules.

See you next time.

35 comments:

My favorite part of this post is your correct usage of the "proof of the pudding" quotation. Not that the rest of the post is lacking, now. Not that at all! I just liked that part best. Mmmmmm. Pudding.

Very interesting post. How would you do more than two dimensions? Thanks.

Freddie,

It's not hard to expand the idea to apply to as many dimensions as you need. The assumption is that each dimension is a page dimension on the form and therefore you can get the single member you've hit from the run-time prompts. For each dimension you need to roll, lock down the rest with FIXes and run @ANCESTORS on that dimension. Then lock down on another slice and do it again for the next dimension, and so on.

-- Joe

Freddie -- Joe has it right.

Let's say that Period was part of this mix (I am reaching here, as PlanSamp is not exactly the world's most complicated Planning/Essbase db).

The code might look like:

FIX("Local", "FY08", "Plan", "Working", "HSP_InputValue")

FIX([varSegment], [varEntity])

@ANCESTORS([varPeriod]) ;

ENDFIX /* FIX([varSegment], [varEntity]) */

FIX(@IANCESTORS([varPeriod]), [varSegment])

@ANCESTORS([varEntity]) ;

ENDFIX /* FIX(@IANCESTORS([varPeriod]), [varSegment]) */

FIX(@IANCESTORS([varPeriod]), @IANCESTORS([varEntity]))

@ANCESTORS([varSegment]) ;

ENDFIX /* FIX(@IANCESTORS([varPeriod]), @IANCESTORS([varEntity])) */

ENDFIX /* FIX("Local", "FY08", "Plan", "Working", "HSP_InputValue") */

It can get a little ugly, but it really isn't that hard to follow.

Regards,

Cameron Lackpour

Does this assume that your entity parents are dynamic calc for this new "agg" technique to work? If not I am still confused how it works but will take it!

Does this "agg" logic assume that the parents in the entity dimension are dynamic?

>>Does this "agg" logic assume that the parents in the entity dimension are dynamic?

Nope. The Entity dimension is aggregated by the code.

The trick here is to think like Essbase and then only calculate the branches of the hierarchy that are needed. It can be very, very fast.

If you did a full AGG("Entity") ; you'd get the same results as the technique I outline in this post, but you would likely (unless you use Intelligent Calc and there aren't many brave enough to do that in a Planning app) calculate many, many, many blocks that don't have a value change along with the handful that do change value.

So long as Entity is in the POV and can be read off the form, this approach works a treat.

Regards,

Cameron Lackpour

P.S. If I can ever get to it, I am going to do a post just like this one but in Calc Manager. Any interest out there?

Just tried this out and it works great except for the top level intersection of both members it will not calculate. Say I have "Product A" and "Market A" and I want to aggregate then to their parents "Products" and "Markets" If I do the fix and look at the intersection "Product A"/"Markets" or "Market A"/"Products" it works but I want to see "Products"/"Markets" and it does not seem to aggregate this value....Any more tricks for this one?

Dear Anon,

>>If I do the fix and look at the intersection "Product A"/"Markets" or "Market A"/"Products" it works but I want to see "Products"/"Markets" and it does not seem to aggregate this value

So it's Products->Markets that doesn't have a data value? And this is from a Planning app?

As an aside, your users can't see that topmost cross dimensional member. Admins can, and I suspect you're one.

Is it possible that the dimensions themselves are Label Only instead of Store or Never Share? The @IANCESTORS function goes all the way to the top.

Here's a test -- if you just do a normal AGG of the two dimensions, do you see the data there?

Regards,

Cameron Lackpour

Hello Cameron - as you suspect this is a planning application and I am the admin. We have a couple of parent levels in theses dimensions and it appears at any of the parent intersections I do not see any data, its not specific to just the highest members. Its just strange, if I look at Level 0->Parent member or Parent Member->Level 0 I see data but when I do parent member->parent member no data. The dimensions themselves were set to 'never share' so I changed to store and still no luck. I made sure all members are Any more thoughts? I was testing on small section of the DB and my calc went from 24 seconds to 3 seconds so I would love to utilize this functionality!

Anon,

Do me a favor and send me a message on LinkedIn. I want to take a look at your outline and the HBR script you wrote. It's making my head hurt trying to diagnose this via the Comments page. I (or you) will post the results once it's figured out.

Regards,

Cameron Lackpour

Cameron,

I have seen used this BR logic successfully in 2 applications over last year. I have also started preaching this as a bible (with correct reference to source) to aggergations in planning.

Now FFwd to now. I am working on an application which has 4 aggregating sparse dimensions A (400 members),B (1500 members),C (3000 members),and D (7000 members). If I use AGG(A,B,C,D), the calc time is 10 seconds but if I use @IANCESTORS approach, aggregation takes 135 seconds. Why would this be happening?

I used following aggreating logic

FIX(@IANCESTORS([B]),@IANCESTORS([C]),@IANCESTORS([D]))

@ANCESTORS([A]);

ENDFIX

FIX(@IANCESTORS([A]),@IANCESTORS([C]),@IANCESTORS([D]))

@ANCESTORS([B]);

ENDFIX

FIX(@IANCESTORS([A]),@IANCESTORS([B]),@IANCESTORS([D]))

@ANCESTORS([C]);

ENDFIX

FIX(@IANCESTORS([A]),@IANCESTORS([B]),@IANCESTORS([C]))

@ANCESTORS([D]);

ENDFIX

The aggregation order is from A to D because A is first and D is last in dimension order. I believe order is critical in this approach but I am not able to figure out what is causing BR to take more time. The block size is about 60k.

>>I have seen used this BR logic successfully in 2 applications over last year. I have also started preaching this as a bible (with correct reference to source) to aggergations in planning.

^^^You're very kind. I came up with it on my own, but alas, it is not unique. Other people were doing it when I first discussed the approach at Kaleidoscope 2009, but not very many. That's what inspired me to write about the approach. It ought to be SOP at every Planning class.

Re your code issue, I think you're using @IANCESTORS in the FIX too early. Try this:

FIX([B], [C], [D])

@ANCESTORS([A]) ;

ENDFIX

FIX(@IANCESTORS([A]), [C], [D])

@ANCESTORS([B]) ;

ENDFIX

FIX(@IANCESTORS([A]), @IANCESTORS([B]), [D])

@ANCESTORS([C]) ;

ENDFIX

FIX(@IANCESTORS([A]), @IANCESTORS([B]), @IANCESTORS([C]))

@ANCESTORS([D]) ;

ENDFIX

I think you'll find this is much, much faster. The scope of the blocks that get touched gets larger as you walk the dimensions, and yes, calculation order and the order of the FIX statements is very important. In your example, you touch the same number of blocks each time.

Regards,

Cameron Lackpour

I've tried to use this method but it won't seem to work for me. I only need to aggregate the entity dimension and it is on the page by so I have my fix statement and trying to agg using @ANCESTORS([Department]); but it doesn't do anything. AGG("Entity"); is working a treat (obviously)

Thanks for a good post! I have been also using a variation of this, but in reverse.

Since I have more than a simple aggregation attached to one hierarchy (internal elimination logic), I didn't want the full hierarchy to be "consolidated", rather only up to the parent the user was granted access to. @IDESCENDANTS([RTP]); of the parent selected in POV as RTP worked well in this case.

Regarding @ANCENSTORS();, have you ever heard of conflicts with users simultaneously writing to the same parent?

Joachim,

>>Regarding @ANCENSTORS();, have you ever heard of conflicts with users simultaneously writing to the same parent?

^^^There aren't truly simultaneous writes to the same block -- Essbase won't (and if you think about it, can't) allow it. Essbase locks the block when the value is being written and then releases it when complete -- that block can then get locked by the second (or third or fourth or whatever) calc process that is trying to write to the block until it too updates the value and releases it; the process repeats itself ad infinitum until all the calc processes are complete. Check out the DBAG -- the theory behind Essbase block locking is covered exhaustively.

The focused aggregation approach isn't any more or less risky than CALC ALL because multiple CALC ALLs (assuming Intelligent Calc is not part of the mix) are going to behave in exactly the same manner.

This is why Planning apps often have nightly calcs that force a full aggregation to make sure that everything's tickety-boo.

Regards,

Cameron Lackpour

Joachim,

>>Regarding @ANCENSTORS();, have you ever heard of conflicts with users simultaneously writing to the same parent?

^^^There aren't truly simultaneous writes to the same block -- Essbase won't (and if you think about it, can't) allow it. Essbase locks the block when the value is being written and then releases it when complete -- that block can then get locked by the second (or third or fourth or whatever) calc process that is trying to write to the block until it too updates the value and releases it; the process repeats itself ad infinitum until all the calc processes are complete. Check out the DBAG -- the theory behind Essbase block locking is covered exhaustively.

The focused aggregation approach isn't any more or less risky than CALC ALL because multiple CALC ALLs (assuming Intelligent Calc is not part of the mix) are going to behave in exactly the same manner.

This is why Planning apps often have nightly calcs that force a full aggregation to make sure that everything's tickety-boo.

Regards,

Cameron Lackpour

Dear Cameron,

I refer to your post below.

http://camerons-blog-for-essbase-hackers.blogspot.com/2009/06/why-i-hate-and-love-business-rules-part.html

In Step 3 of the above post, we associate a database outline. I have a planning application which refreshes back to an Essbase server of the same name.

Therefore I am not able to view the Planning application under the Planning Servers list in step 3 of your post. I can only see it under Essbase servers.

Which is why I am not able to view this Business Rule when I try to access it through the Planning application.

It works fine when launched from Essbase though. Any suggestions?

Cheers,

Sahil

Interesting and really useful

Hi Cameron,

My situation is that I want to basically do an @IRDESCENDANTS(nodemember); to aggregate only one node... but it seems to not be working. I don't see any references anywhere (except from you) that call into doubt the reliability of @IRDESCENDANTS... but it seems like it's not always aggregating correctly. So I figured i'd find the first level 0 member of that 'nodemember' and do an @ALLANCESTORS on it... but can't get to only one level zero member. any suggestions?

Jon,

>>I don't see any references anywhere (except from you) that call into doubt the reliability of @IRDESCENDANTS

^^^I don't think I call into question the usage of @IRDESCENDANTS -- I have never (well, my memory can be pretty bad, so maybe I have) used that function.

OTOH, @ALLANCESOTRS does work a treat. You're in an old blog post -- check out the companion/replacement blog posts for Calculation Manager. In it, I show how to use @ALLANCESTORS successfully.

http://camerons-blog-for-essbase-hackers.blogspot.com/2012/03/why-i-hate-and-love-calculation-manager.html

And the one with @ALLANCESTOR code examples:

http://camerons-blog-for-essbase-hackers.blogspot.com/2012/03/why-i-hate-and-love-calculation-manager_18.html

Contact me via LinkedIn if you can't get those to work. Maybe it's time for a quick blog post on @IRDESCENDANTS. It is a different perspective on calculating a portion of the db although in a Planning context I'd like to hear why an ancestor approach isn't better.

Regards,

Cameron Lackpour

Hi Cameron, do you know how this would work if I had to use RTP that contains multiple members, being that @ANCESTORS only accepts one member as argument?

I'm thinking of, where A and B are RTPs, and A can be multiple members.

FIX([B])

@ANCESTORS([A]) ;

ENDFIX

FIX(@IANCESTORS([A]))

@ANCESTORS([B]) ;

ENDFIX

Any ideas??

Forgive the rudimentary nature of this question, but I'm a newbie to Hyperion and Essbase. Can you tell me how simply invoking @ANCESTORS(), which is documented as simply returning a member list, triggers the calculation of the rollups? I understand how calling the AGG() function--an explicit calculation--results in the updating of the outline.

Best Regards,

^-v^-

Forgive the rudimentary nature of this question, but I'm a newbie to Hyperion and Essbase. Can you tell me how simply invoking @ANCESTORS(), which is documented as simply returning a member list, triggers the calculation of the rollups? I understand how calling the AGG() function--an explicit calculation--results in the updating of the outline.

Best Regards,

^-v^-

Vini,

I know, throwing an @ANCESTORS(membername) ; doesn't at first blush seem to force an aggregation of a member and its ancestors but Run The Code And See.

It really does work.

It may make you feel a little better if I tell you I have never seen it documented -- I saw the code somewhere, wondered what it did, tried it, and was amazed.

Regards,

Cameron Lackpour

Cameron, I am encountering a long AGG time, and I want to AGG 3 dimensions. One is in the Page Section of the form and the Other Two are in the Rows of the form. I don't think I can use a Global Variable to hone in on the dimensions in the Rows. To add to the complexity, one of the Rows is leveraging a User Variable to display the Children of the UV to pop those members into the Rows. Maybe there is a way, just hoping you could help. Thanks, Tom

Tom,

What you ask can be done.

See this post:

http://camerons-blog-for-essbase-hackers.blogspot.com/2013/10/row-driven-focused-aggregations-in.html

Regards,

Cameron Lackpour

I created the following script on your comment. It takes 5.476 seconds to run when i only entered dat for 3 accounts for one dept only.

________________

FIX({Scenario},{Version},{Year})

FIX({Department}, "No Vendor (requires supporting details)", "NoRiskProject", "No Region")

@ANCESTORS("No Product") ;

ENDFIX

FIX(@IANCESTORS("No Product"), "No Vendor (requires supporting details)", "NoRiskProject", "No Region")

@ANCESTORS({Department}) ;

ENDFIX

FIX(@IANCESTORS("No Product"), @IANCESTORS({Department}), "NoRiskProject", "No Region")

@ANCESTORS("No Vendor (requires supporting details)") ;

ENDFIX

FIX(@IANCESTORS("No Product"), @IANCESTORS({Department}), @IANCESTORS("No Vendor (requires supporting details)"), "No Region")

@ANCESTORS("NoRiskProject") ;

ENDFIX

FIX(@IANCESTORS("No Product"), @IANCESTORS({Department}), @IANCESTORS("No Vendor (requires supporting details)"), @IANCESTORS("NoRiskProject"))

@ANCESTORS("No Region") ;

ENDFIX

ENDFIX

______________________________

I also created a rule with hard coded script and it took it 0.312 secs to run for same subset of data

____________________________

FIX("Budget","FY15","1st Pass - Budget")

AGG("Department","Product","VendorHospital","RiskProject","Region");

ENDFIX

___________________________

Please suggest what needs to be fixed

And I also did things in the order of outline in essbase

A. Product

B. Department

C. VendHos

D. RiskProj

E. Region

I have updatecalc off; but why do my script take 15 minutes to run the first time then timing starts going down and by the 4th or 5th time I am running time goes down 40 seconds, which is the best timing i can get. It does everyday when i run the it the first time.

I have updatecalc off; but why do my script take 15 minutes to run the first time then timing starts going down and by the 4th or 5th time I am running time goes down 40 seconds, which is the best timing i can get. It does everyday when i run the it the first time.

Perhaps it is builds blocks the first time you run it

Perhaps it is builds blocks the first time you run it

Perhaps it is builds blocks the first time you run it

Is it possible to combine multiple scripts into one script.If possible what sort of issues going to raise?

Post a Comment